What to Look for in AI Testing Services

Introduction

Imagine launching an AI-powered chatbot that performs well in internal tests but starts giving irrelevant or inconsistent responses when real users interact with it. Over time, its accuracy slowly drops. The release appeared successful, but the risks lay beneath the surface.

Traditional testing catches errors in deterministic code but misses AI-specific issues such as model drift, bias, and edge-case failures. Many AI projects only encounter these risks in production, so targeted AI testing is essential to validate accuracy, performance, and real-world behavior over time.

At Global App Testing, our AI testing services focus on real-world validation, human oversight, and structured processes. The goal is to help teams release AI-powered products with confidence, reduce risk early, and maintain quality as systems evolve.

In this article, we will explain what to look for in AI testing services. You will find a clear buyer’s guide and a practical checklist to help you choose a partner that fits your AI goals.

What are AI testing services & why do they matter?

AI testing services focus on validating how AI systems behave in real-world conditions. Unlike traditional software testing, which checks fixed rules and expected outputs, AI testing evaluates probabilistic systems.

QA service providers such as Global App Testing (GAT) assess the following key areas in AI testing:

- Model accuracy and performance

- Data quality and representativeness

- Bias and fairness

- Robustness against edge cases and adversarial inputs

- Model drift over time

- Security and privacy risks

- Compliance with legal and regulatory standards

Many organizations now use AI-driven test automation within their QA processes. Medium to large enterprises report testing time reductions between 30% and 45%.

Why AI testing services matter

AI products directly shape user experience and business outcomes. If a model gives inconsistent answers, unfair decisions, or inaccurate predictions, users lose trust and companies lose revenue. At scale, internal QA teams cannot manually test every scenario, edge case, or data shift. That is why many organizations rely on specialized AI testing services to validate performance under real-world conditions before and after release.

Moreover, regulations such as the GDPR and the EU AI Act require proof of compliance and impose fines for noncompliance. Skipping structured AI testing increases the likelihood that bias, privacy, and accuracy problems will reach production.

Comprehensive AI testing services can help to resolve these issues and deliver measurable business value. For example, 75% of organizations report reduced testing costs after using AI, and 80% report improved defect detection.

Key criteria for evaluating AI testing services

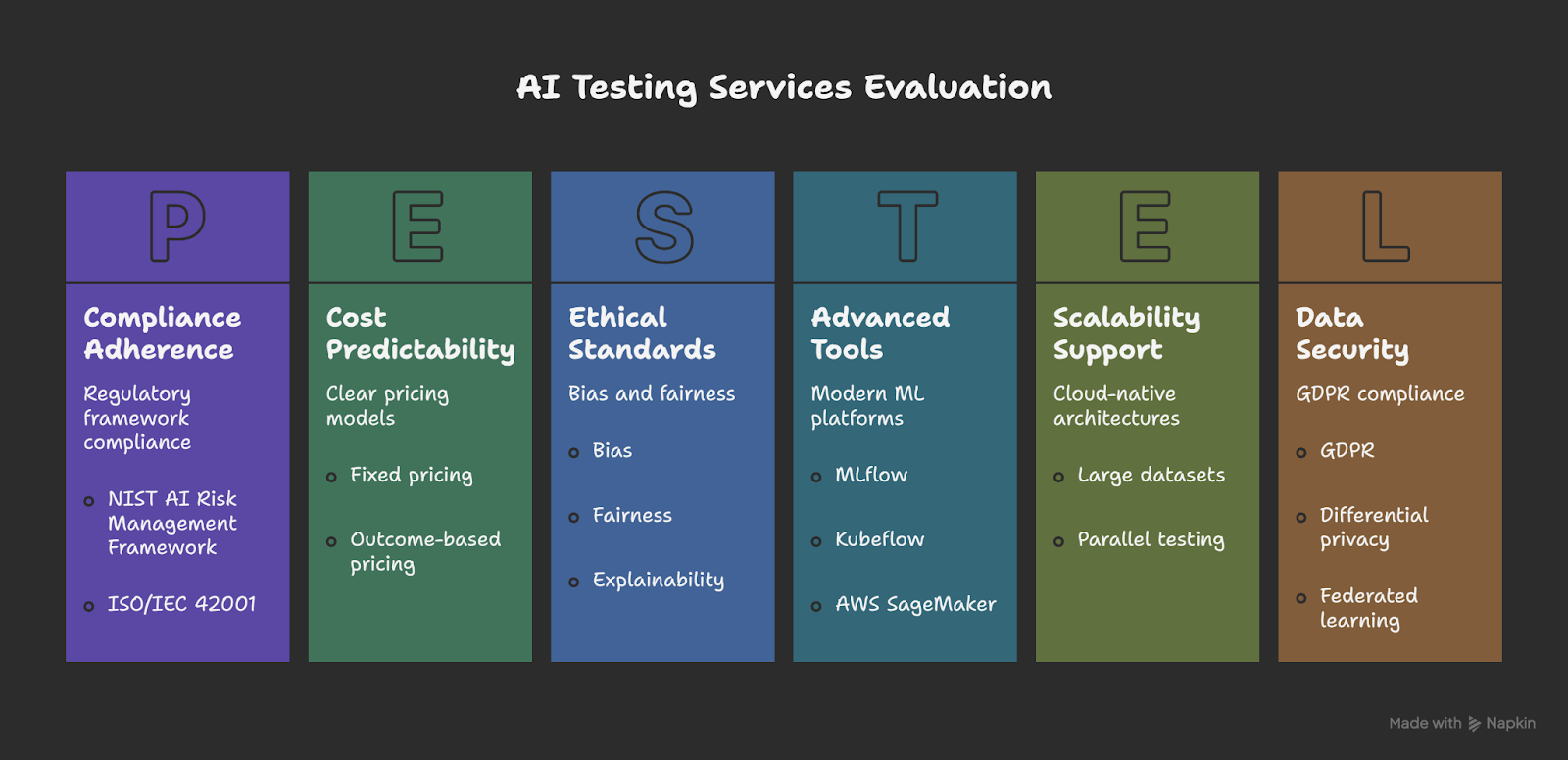

When evaluating AI testing services, look at how each capability replaces manual testing effort, accelerates release velocity, and improves model reliability.

Below are the key criteria to assess:


1. Proven expertise and domain experience

Choose AI testing providers with hands‑on experience in real AI systems and complex environments. Providers should be able to explain how they validate models, monitor drift, and assess real‑world behavior across different industries.

Ask them for case studies demonstrating their success with large-scale systems, such as telecom apps handling real-time data or finance tools processing user interactions. At Global App Testing, we’ve supported large, complex technical initiatives.

For example, during a major API transition for Facebook, our team helped scale test coverage dramatically. Within a single week, testers validated over 1000 apps and identified hundreds of bugs, providing actionable insights and helping ensure a smoother migration for developers.

Manual processes replaced: Manual tracking in spreadsheets and ad hoc bias checks slow validation. Automated AI testing pipelines with centralized tracking speed decisions and improve release stability.

2. Comprehensive testing methodologies

AI systems must be tested across multiple dimensions:

- Functional: Accuracy, precision, recall, F1-score

- Performance: Latency, throughput, scalability

- Robustness: Adversarial input resistance

- Ethical: Bias, fairness, and explainability
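As a rough illustration of the functional metrics listed above, the sketch below computes accuracy, precision, recall, and F1-score for a binary classifier using only the Python standard library. The function name, the dataclass, and the sample labels are hypothetical and exist only for this example; a real evaluation would use a held-out dataset that reflects production traffic.

```python
from dataclasses import dataclass


@dataclass
class ClassificationMetrics:
    accuracy: float
    precision: float
    recall: float
    f1: float


def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for a binary task."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("inputs must not be empty")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn

    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return ClassificationMetrics(accuracy, precision, recall, f1)


# Hypothetical labels and predictions, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Reporting all four numbers together matters because accuracy alone can look healthy on imbalanced data while recall on the minority class is poor.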

A capable provider combines automation with targeted human oversight. Automated validation ensures consistency across retraining cycles. Human review focuses on edge-case evaluation and ethical assessments.

At GAT, our QA teams combine automated testing with human review. We run regression tests for every retrained model and use crowdtesting to validate real-world performance.

Manual processes replaced: Relying on manual output reviews and regression tests delays releases. Automated regression pipelines validate each model version consistently, enabling faster decisions and smoother deployments.

3. Advanced tools and technology stack

AI testing services should support modern ML platforms such as MLflow or Kubeflow. Integration with cloud environments like AWS SageMaker or Azure ML ensures smooth deployment testing.

At GAT, we integrate AI validation into continuous integration and continuous delivery (CI/CD) pipelines so model testing runs automatically with every update. Our approach supports cloud-native environments, allowing large datasets and multiple environments to be tested in parallel across real devices and global locations.

Manual processes replaced: Manual script runs and log reviews slow down testing. Embedding automated validation in CI/CD provides continuous coverage and lower rollback risk.
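To make the idea of automated validation in CI/CD more concrete, here is a minimal sketch of a regression gate that compares a candidate model's metrics with a stored baseline. It is an assumption about how such a gate could look, not a description of any provider's pipeline. The metric names, tolerances, and sample values are hypothetical.

```python
# Hypothetical tolerances: how far each metric may degrade before the gate fails.
HIGHER_IS_BETTER = {"accuracy": 0.01, "f1": 0.01}
LOWER_IS_BETTER = {"p95_latency_ms": 20.0}


def find_regressions(baseline, candidate):
    """Return descriptions of metrics that regressed beyond their tolerance."""
    failures = []
    for name, tolerance in HIGHER_IS_BETTER.items():
        if candidate[name] < baseline[name] - tolerance:
            failures.append(f"{name}: {baseline[name]:.3f} -> {candidate[name]:.3f}")
    for name, tolerance in LOWER_IS_BETTER.items():
        if candidate[name] > baseline[name] + tolerance:
            failures.append(f"{name}: {baseline[name]:.1f} -> {candidate[name]:.1f}")
    return failures


# Hypothetical metric snapshots; a real pipeline would load these from
# its experiment tracker or from evaluation job artifacts.
baseline = {"accuracy": 0.91, "f1": 0.88, "p95_latency_ms": 180.0}
candidate = {"accuracy": 0.92, "f1": 0.85, "p95_latency_ms": 190.0}

failures = find_regressions(baseline, candidate)
for line in failures:
    print("REGRESSION", line)

# A CI job would typically exit with this code so the build fails on regression.
exit_code = 1 if failures else 0
print("exit code:", exit_code)
```

Here the candidate improves accuracy but is blocked because F1 drops by more than the allowed tolerance, which is the kind of trade-off a manual log review can easily miss.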

4. Managed services and support model

AI systems require continuous monitoring after deployment. A strong managed AI testing provider offers full-lifecycle coverage, including data validation, deployment checks, and post-release monitoring.

For instance, Global App Testing offers both managed AI testing models:

- Fully managed: We handle end-to-end AI validation, from data preparation and model testing to post-release monitoring and reporting.

- Semi-managed: We collaborate with internal teams, extending their QA capabilities with our global testing network and structured AI validation frameworks.

We also offer full-lifecycle coverage, including data validation, deployment checks, and continuous monitoring.

Clear pricing models are also important. Fixed pricing provides predictability. Outcome-based pricing aligns testing performance with business results. Building in-house AI testing expertise takes time. Managing it at scale takes even longer.

Manual processes replaced: In-house teams often track models manually and fix issues after users report them. Managed AI testing adds proactive monitoring and automated alerts, boosting release confidence and reducing downtime.

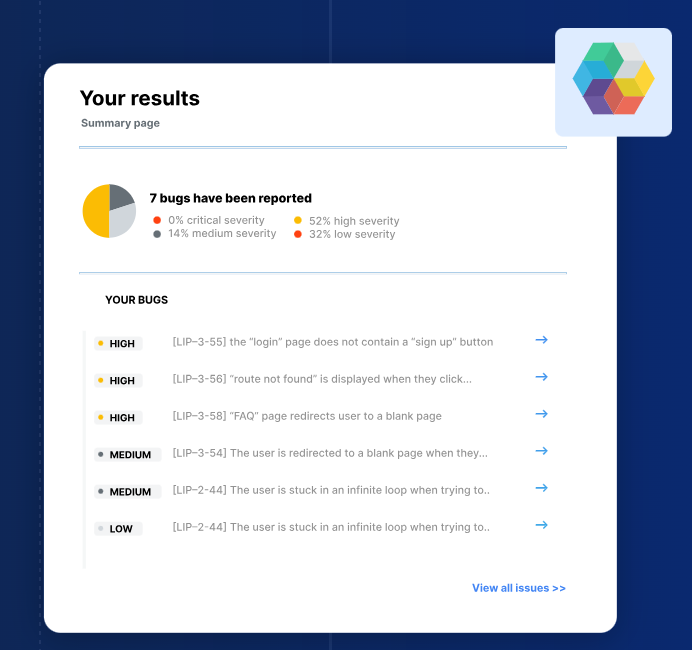

5. Client success metrics and reporting

A reliable AI testing partner should provide measurable results. Providers should track:

- Defect detection rates

- Model accuracy improvements

- Test cycle reduction

- Release stability

At Global App Testing, we deliver real-time results and detailed reporting that show these metrics in context. Our dashboard gives immediate visibility into test case outcomes and environmental coverage, so teams can quickly understand where problems occur and how to address them. We can also integrate results with your existing tools, like Jira or TestRail, for seamless workflow integration.

Manual processes replaced: Manual KPI tracking through spreadsheets delays insights. Automated dashboards deliver immediate visibility. Faster insights lead to faster decisions and smoother releases.

Questions to ask before choosing an AI testing provider

Vendor websites will all claim “AI expertise.” What separates a capable AI testing partner from a generic QA provider is how they answer detailed technical questions. The goal is to uncover process maturity, automation depth, and lifecycle ownership.

Stakeholders can use the questions below to pressure-test real capability.

1. What AI models and frameworks do you support?

A strong provider should support ML and deep learning models, LLMs and generative AI systems, computer vision, natural language processing (NLP) pipelines, and recommender or real-time decision systems.

Ask about frameworks such as TensorFlow, PyTorch, and scikit-learn, and cloud platforms like AWS SageMaker or Azure ML. The provider should test full pipelines, including preprocessing, feature stores, and deployment workflows. Limited framework support creates integration gaps and slows releases.

At GAT, we validate AI applications across a wide range of models and frameworks, ensuring end-to-end testing from preprocessing to deployment while supporting real-world device and environment coverage.

2. How do you test for bias and fairness?

Bias testing must be measurable. Ask which fairness metrics they apply, such as demographic parity or equalized odds. Providers should be able to perform dataset distribution analysis and cohort-level error analysis to detect uneven performance across user groups.
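The two fairness metrics named above can be sketched in a few lines. The example below is a simplified illustration, assuming binary labels, binary predictions, and a single group attribute. The helper names and the sample data are hypothetical. Demographic parity is measured as the gap in selection rate between groups, and equalized odds as the larger of the gaps in true positive rate and false positive rate.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate."""
    counts = defaultdict(
        lambda: {"n": 0, "pred_pos": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0}
    )
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["pred_pos"] += p
        if t == 1:
            c["pos"] += 1
            c["tp"] += p
        else:
            c["neg"] += 1
            c["fp"] += p
    return {
        g: {
            "selection_rate": c["pred_pos"] / c["n"],
            "tpr": c["tp"] / c["pos"] if c["pos"] else 0.0,
            "fpr": c["fp"] / c["neg"] if c["neg"] else 0.0,
        }
        for g, c in counts.items()
    }


def max_gap(rates, key):
    """Largest difference in one rate across all groups."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Hypothetical cohort data, for illustration only.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = group_rates(y_true, y_pred, groups)
demographic_parity_gap = max_gap(rates, "selection_rate")
equalized_odds_gap = max(max_gap(rates, "tpr"), max_gap(rates, "fpr"))
print(rates)
print(demographic_parity_gap, equalized_odds_gap)
```

A gap of zero means the groups are treated identically on that metric. What counts as an acceptable gap is a policy decision that depends on the use case and the applicable regulation, so it is worth asking a provider how they set and justify their thresholds.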

For LLMs, ask about hallucination testing, toxicity checks, and adversarial prompt validation. A red flag is when a provider relies solely on basic accuracy metrics without structured stress testing to detect harmful or unsafe outputs. You need structured reporting that supports regulatory compliance and internal governance.

At GAT, our diverse global team supports cohort-level fairness testing and delivers structured reports on usability and edge cases that teams can use as compliance evidence.

3. Do you offer managed AI testing services?

AI validation must continue after deployment. Confirm they provide:

- Pre-release model validation

- Drift detection

- Continuous regression testing

- Production monitoring

Managed services reduce internal overhead and prevent silent model degradation. At Global App Testing, we deliver fully managed end-to-end testing, coordinating execution and monitoring to cut internal overhead.
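One common way to detect the drift mentioned above is the Population Stability Index (PSI), which compares the distribution of a feature or model score in production with a baseline sample. The sketch below is a simplified, standard-library version that assumes equal-width bins taken from the baseline range. The function name and the synthetic data are illustrative, and the 0.25 alert level is a frequently quoted rule of thumb rather than a formal standard.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a production sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample must contain more than one distinct value")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration: the "production" sample is shifted upward.
baseline = [i / 100 for i in range(100)]
production = [0.3 + i / 100 for i in range(100)]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, production)
print(psi_same, psi_shifted)

# Rule-of-thumb alert level (an assumption, tune per feature and use case).
drift_alert = psi_shifted > 0.25
print("drift alert:", drift_alert)
```

In a monitoring setup, a check like this would run on a schedule against recent production data, which is how silent degradation gets flagged before users report it.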

4. How do you handle model updates and retraining?

Model updates require automated regression testing. Ask about:

- Version-to-version performance comparison

- Statistical significance testing

- Drift monitoring

- CI/CD integration

Validation should trigger automatically during retraining cycles. Manual review slows release velocity and increases risk. At GAT, our rapid regression testing via crowdsourcing and automation supports reliable CI/CD.
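As an illustration of statistical significance testing in a version-to-version comparison, the sketch below applies a two-sided two-proportion z-test to the accuracy of two model versions. It assumes each version was evaluated on an independent test sample. When both versions are scored on the same examples, a paired test such as McNemar's is usually more appropriate. The function name and the counts are hypothetical.

```python
import math


def two_proportion_z_test(correct_a, n_a, correct_b, n_b):
    """Two-sided z-test for a difference in accuracy between two model versions."""
    p_a = correct_a / n_a
    p_b = correct_b / n_b
    pooled = (correct_a + correct_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both versions are all-correct or all-wrong: no measurable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution, via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical evaluation counts: v1 gets 900 of 1000 correct, v2 gets 880 of 1000.
z, p_value = two_proportion_z_test(900, 1000, 880, 1000)
print(f"z = {z:.3f}, p = {p_value:.3f}")

# Hypothetical decision rule: only treat the drop as real below this level.
ALPHA = 0.05
significant = p_value < ALPHA
print("significant regression:", significant and z < 0)
```

With these sample counts, the two-point drop in accuracy does not reach significance at the 0.05 level, so a gate built on this test would ask for more evaluation data rather than block the release outright.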

5. What reporting and insights do you provide?

Reporting should serve both engineering teams and executive stakeholders. Request clarity on KPIs and dashboards. Look for:

- Accuracy and error-rate tracking

- Drift indicators

- Bias metrics

- Real-time monitoring dashboards

- Compliance-ready audit logs

Be cautious when reporting is limited to basic pass/fail results or static PDF summaries without trend analysis or real-time visibility. This often means monitoring is not integrated into the MLOps pipeline. At GAT, we provide real-time dashboards that give teams immediate visibility into test coverage and results, enabling faster, more informed decisions.

This checklist helps you confirm that a provider can validate models effectively, reduce manual testing, and accelerate deployment.

Ready to supercharge your AI releases with GAT?

AI systems fail when testing is shallow, disconnected from MLOps, or limited to pre-release checks. Strong AI testing services take a more structured and mature approach. They validate data quality before model training begins and benchmark model performance against production baselines.

At Global App Testing, we combine AI-driven validation with real-world testing on real devices. With real-device validation across 190+ countries and up to 50% faster release cycles, GAT helps enterprises test ML models, generative AI systems, and intelligent applications at scale without adding internal QA overhead.

Book a demo with Global App Testing today and strengthen every AI release before it reaches production.
