Common challenges teams face when adopting AI testing

Introduction

AI testing is now part of enterprise QA strategy, but adoption is rarely seamless. While AI accelerates coverage and analysis, many teams face integration friction, siloed processes, and limited trust in automated results.

In 2026, the real challenge isn’t deploying AI; it’s operationalizing it. Tools must align with workflows, outputs must be explainable, and insights must translate into production-ready decisions. Without that alignment, speed gains don’t equal release confidence.

This blog outlines the key challenges of AI-driven testing and practical strategies to make it deliver measurable, real-world impact.

Defining success in AI testing

At Global App Testing, success in AI testing is defined by measurable impact across user experience, defect trends, post-launch performance data, and overall business outcomes.

Here are a few key metrics our teams use to define success:

- High-priority defect count post-production

- Production-blocking defect detection rate before release

- Release decision confidence score (stakeholder sign-off without rework)

- False positive rate in AI-generated defect reports

- Number of hotfixes required in production

- Average time to ship releases without quality-related delays
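Two of these metrics reduce to simple ratios. The sketch below is one way they might be computed from per-release counts; the `ReleaseStats` class, its field names, and the sample numbers are illustrative assumptions, not a Global App Testing tool or real project data.

```python
from dataclasses import dataclass


@dataclass
class ReleaseStats:
    """Hypothetical per-release counts; field names are illustrative."""
    ai_reports_total: int              # defect reports raised by the AI tool
    ai_reports_rejected: int           # reports triaged as "not a real defect"
    blockers_found_pre_release: int    # production-blocking defects caught in testing
    blockers_found_in_production: int  # production-blocking defects that escaped


def false_positive_rate(stats: ReleaseStats) -> float:
    """Share of AI-generated reports that triage rejected."""
    if stats.ai_reports_total == 0:
        return 0.0
    return stats.ai_reports_rejected / stats.ai_reports_total


def blocker_detection_rate(stats: ReleaseStats) -> float:
    """Share of production-blocking defects caught before release."""
    total = stats.blockers_found_pre_release + stats.blockers_found_in_production
    if total == 0:
        return 1.0  # no blockers existed, so none were missed
    return stats.blockers_found_pre_release / total


# Made-up numbers, purely for illustration.
stats = ReleaseStats(
    ai_reports_total=40,
    ai_reports_rejected=10,
    blockers_found_pre_release=9,
    blockers_found_in_production=1,
)
print(f"False positive rate: {false_positive_rate(stats):.0%}")        # 25%
print(f"Blocker detection rate: {blocker_detection_rate(stats):.0%}")  # 90%
```

Tracking both ratios release over release shows whether AI-generated reports are getting more trustworthy and whether fewer blockers are reaching production.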

The goal of testing is to reveal real risks, direct effort efficiently, and support confident release decisions. Comparing common assumptions with the outcomes of effective testing clarifies its true impact.

What AI testing success really looks like

Clarifying success in AI testing upfront helps teams focus on results that strengthen release confidence rather than on counting automated activity. Effective AI testing is measured by how well it uncovers meaningful risk, not by execution volume or raw defect counts.

AI insights are most valuable when they help teams understand real risks early, so they can move forward with confidence before release.

At Global App Testing, we see AI testing succeed when teams align expectations with measurable impact:

| Common assumption | What effective testing delivers |
| --- | --- |
| More automated executions mean better coverage | Focused insights with a higher signal-to-noise ratio |
| Testing can replace manual effort | Testing enhances human expertise and judgment |
| Detecting more defects automatically means better quality | Prioritization of defects based on real user impact |
| High accuracy in controlled environments guarantees success | Validation in real-world scenarios ensures reliability |
| Faster execution is the primary goal | Stronger, more confident release decisions |

Focusing on impact rather than activity ensures that testing drives real value. Metrics alone don’t guarantee quality. Without complete and representative data, teams risk missing critical issues before release.

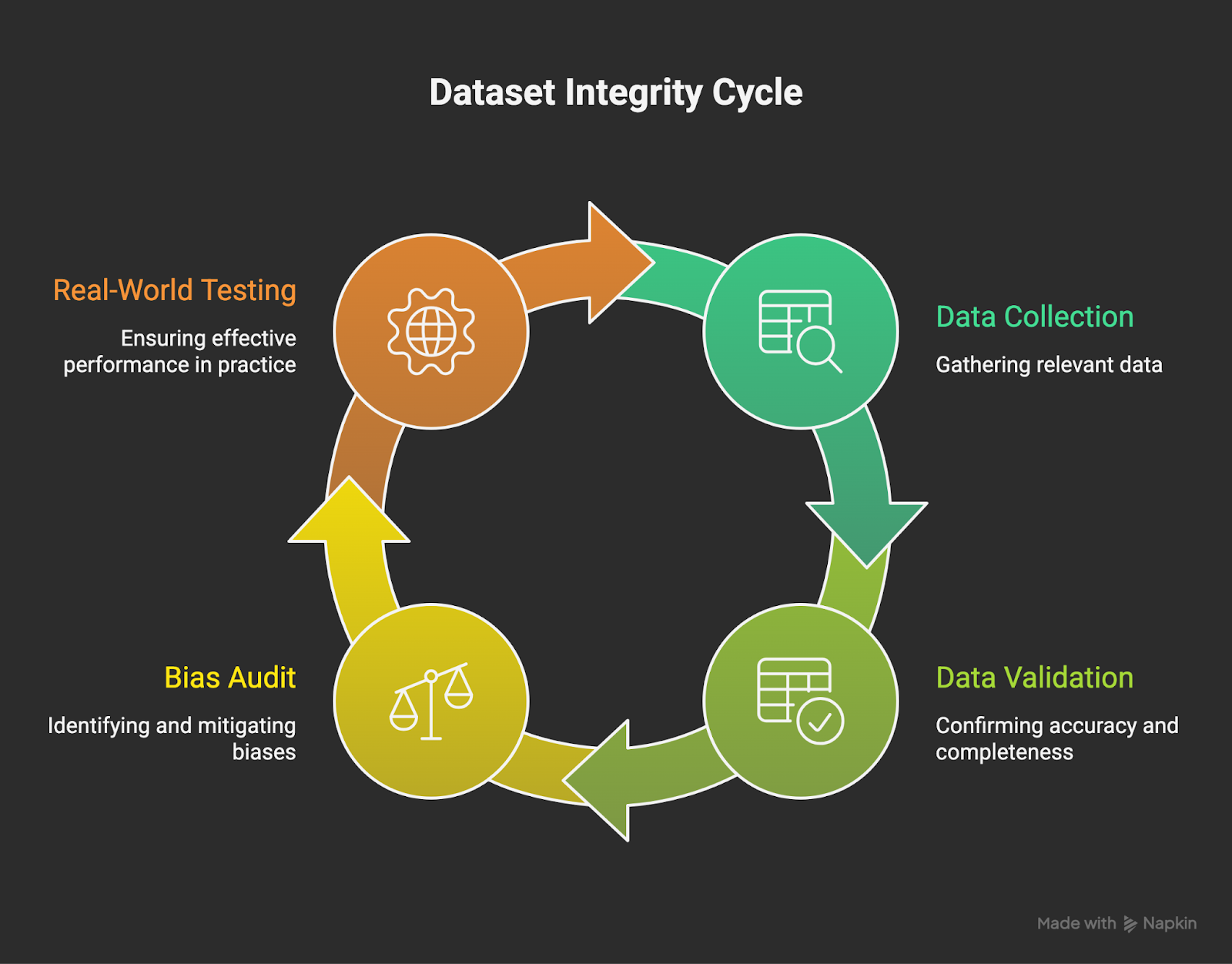

Challenge 1: Data quality and accessibility

AI testing quality depends on accurate, representative data. Missing or biased data can hide critical issues, such as poor performance on older devices. Similarly, datasets lacking regional diversity can overlook localization or language inconsistencies.

Internal tests may appear successful, yet diverse real-world users often reveal hidden issues.

Practical examples:

- Internal testing may look solid, but actual users reveal defects on specific devices or in certain regions.

- Regression cycles pass in controlled environments but fail under real-world usage conditions.

Figure: Data Quality Impact Flow

How engineering leads can overcome these challenges in AI testing:

- Implement disciplined data governance and review processes for AI testing datasets.

- Continuously audit AI testing datasets for bias and coverage gaps.

- Incorporate anonymized user behavior data to improve AI testing accuracy.

- Validate AI testing insights using AI tools in production-like environments.
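Auditing a dataset for coverage gaps can start very simply. The sketch below counts test records per device and region combination and lists the combinations with too few samples. The record format, the `coverage_gaps` helper, and the device and region names are all assumptions made for illustration.

```python
from collections import Counter
from itertools import product


def coverage_gaps(records, devices, regions, min_samples=1):
    """Return (device, region) pairs with fewer than min_samples records."""
    counts = Counter((r["device"], r["region"]) for r in records)
    return [
        (device, region)
        for device, region in product(devices, regions)
        if counts[(device, region)] < min_samples
    ]


# Hypothetical test-session records.
records = [
    {"device": "new-flagship", "region": "US"},
    {"device": "new-flagship", "region": "BR"},
    {"device": "older-budget", "region": "US"},
]

gaps = coverage_gaps(
    records,
    devices=["new-flagship", "older-budget"],
    regions=["US", "BR"],
)
print(gaps)  # [('older-budget', 'BR')]
```

Here the audit shows that no data exists for the older device in one region, which is exactly the kind of blind spot that lets internal tests pass while real users hit defects.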

By combining AI-driven analysis with real-device testing across multiple markets, Global App Testing helped LiveSafe validate critical flows, uncover hidden issues, and align cross-functional teams on quality goals.

Teams working with Global App Testing and airportr expanded real-device testing, resolved overlooked issues faster, and built confidence. These results highlight how teams can overcome data challenges before facing the next hurdle: limited AI testing expertise.

Challenge 2: Limited AI testing expertise

Engineers are measured on productivity and quality, not experimentation with new AI tools, which discourages teams from adopting AI testing or building expertise.

Additionally, if tools are not well integrated into existing workflows, they hurt developer productivity.

In our experience, teams overcome this challenge by adopting the following practices:

- Upskilling QA teams on testing methodologies and limitations of AI models

- Encouraging structured collaboration between QA and data teams

- Defining internal frameworks for interpreting AI testing findings

- Using hybrid human–machine validation for business-critical workflows

- Leveraging crowdtesting to validate AI insights across real devices, networks, and user conditions

For example, Carry1st improved its checkout experience by aligning automated signals with human-interpreted insights, reducing friction and increasing transaction success.

Challenge 3: Integration with existing QA workflows

Many enterprises buy AI products to enhance development and testing quality, but teams are often reluctant to use them. This happens for several reasons:

- Context switching or poor integration: If tools require moving between IDEs like VS Code, or exporting/importing test cases to generate AI scripts, teams often see these steps as extra work and may prefer manual processes.

- Skepticism about outputs: Developers and QA teams may doubt AI-generated test cases, auto-generated code, or black box outputs.

- Unclear reliability: Teams hesitate when they don’t understand how AI works, cannot verify its accuracy, or encounter hallucinations in edge cases.

AI insights deliver value only when fully integrated into development and QA workflows, preventing duplication and misalignment. Accuracy alone isn’t sufficient; real impact comes from embedding insights directly into QA execution and decision-making.

To resolve this challenge, teams can take the following steps:

- Define clear pilot scopes before scaling AI tools.

- Embed AI testing outputs directly into CI/CD pipelines.

- Align reporting with existing QA dashboards.

- Avoid introducing redundant tools that fragment workflows.
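As a rough sketch of what embedding AI testing outputs in a CI/CD pipeline might look like, the script below turns a list of AI findings into a pass or fail exit code. The JSON report format, the field names (`id`, `severity`, `confidence`), and the thresholds are assumptions; a real tool's export format will differ.

```python
import json

# Assumption: severities that should block a release in this sketch.
BLOCKING_SEVERITIES = {"critical", "high"}


def load_findings(report_path):
    """Read a JSON array of findings exported by the AI testing tool."""
    with open(report_path, encoding="utf-8") as fh:
        return json.load(fh)


def blocking_findings(findings, min_confidence=0.8):
    """Keep findings severe and confident enough to stop the pipeline."""
    return [
        f for f in findings
        if f["severity"] in BLOCKING_SEVERITIES
        and f["confidence"] >= min_confidence
    ]


def gate_exit_code(findings):
    """Return 1 (fail the build) if any blocking finding exists, else 0."""
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKING {f['id']}: {f['severity']}, confidence {f['confidence']:.2f}")
    return 1 if blockers else 0


# Illustrative findings; a pipeline step would call load_findings(path) instead.
sample = [
    {"id": "AI-101", "severity": "high", "confidence": 0.93},
    {"id": "AI-102", "severity": "low", "confidence": 0.99},
    {"id": "AI-103", "severity": "critical", "confidence": 0.55},
]
print("exit code:", gate_exit_code(sample))
```

A pipeline step could pass the result to `sys.exit` so that the build fails in the same place as any other quality check. Low-confidence findings such as `AI-103` do not block the build here, so they would need a separate path to human review.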

When AI insights feed directly into development workflows, they accelerate delivery rather than causing disruption. Proper integration ensures adoption supports agility, strengthens collaboration, and improves overall release quality.

Challenge 4: Trust, explainability, and decision confidence

Teams often hesitate to rely on AI outputs when the reasoning behind flagged defects is not clearly visible:

- Unclear reasoning: Teams are less confident when AI reports provide no rationale for flagged issues.

- Black box behavior: AI-generated scripts or defects are harder to trust when their reasoning isn’t visible.

- Fear of missed risks: Blindly following AI suggestions in critical workflows like payments feels risky.

- Low decision confidence: Even accurate insights may seem advisory if relevance or risk isn’t clear.

Global App Testing helped Booking.com reduce QA time by 70% by prioritizing defects based on real user impact and providing transparent AI insights, boosting stakeholder confidence and ensuring reliable releases.

Figure: Building Trust Through Validation

Here is how engineering leads can overcome these challenges:

- Guide teams on proper AI tool usage within workflows.

- Explain AI outputs and their business relevance.

- Support training to help teams interpret AI insights confidently.

- Streamline processes to reduce friction and redundant steps.

- Choose tools with traceable reasoning and document AI-driven decisions.

- Apply hybrid validation, combining automated insights with human review for high-risk scenarios.
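Hybrid validation can be expressed as a small routing rule. The sketch below sends a finding to human review when it touches a high-risk area, arrives without a rationale, or falls below a confidence threshold. The area names, field names, and threshold are hypothetical and would need tuning for each team.

```python
# Assumption: product areas a team treats as business-critical.
HIGH_RISK_AREAS = {"payments", "checkout", "authentication"}


def needs_human_review(finding, min_confidence=0.9):
    """Decide whether a person should confirm an AI finding before acting on it."""
    if finding["area"] in HIGH_RISK_AREAS:
        return True  # critical workflows always get a human check
    if not finding.get("rationale"):
        return True  # no visible reasoning means no basis for trust
    return finding["confidence"] < min_confidence


findings = [
    {"id": "F1", "area": "payments", "confidence": 0.97, "rationale": "Timeout on submit"},
    {"id": "F2", "area": "settings", "confidence": 0.95, "rationale": ""},
    {"id": "F3", "area": "settings", "confidence": 0.95, "rationale": "Label overlaps icon"},
    {"id": "F4", "area": "search", "confidence": 0.60, "rationale": "Empty results page"},
]

review_queue = [f["id"] for f in findings if needs_human_review(f)]
print(review_queue)  # ['F1', 'F2', 'F4']
```

Only `F3` is accepted automatically: it is outside the high-risk areas, explains itself, and clears the confidence threshold.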

By pairing transparent AI insights with human judgment, teams can trust outputs, act confidently, and integrate AI testing effectively even in resistant organizational cultures.

Challenge 5: Organizational resistance and change management

When companies introduce AI tools to shift from manual to AI-driven testing, teams often face hurdles:

- Reluctance to change: QA teams may resist AI adoption when it disrupts established workflows and responsibilities.

- Lack of incentives: Engineers are typically evaluated on delivery speed and reliability, not on their adoption of new AI testing methods.

- Cross-functional misalignment: QA, development, and data teams may operate with different expectations for AI testing outcomes.

- Fear of replacement: Some teams perceive AI as a threat to their expertise rather than a tool to enhance it.

Practical example: During a Global App Testing engagement with PromoFarma, real-device and browser testing uncovered critical checkout issues, reduced duplicate reporting, and brought teams into alignment, demonstrating clear value and encouraging adoption.

How engineering leaders can overcome these issues:

- Align on business outcomes related to quality and release performance.

- Pilot specific use cases to quickly demonstrate the value of AI testing.

- Align AI testing metrics with existing release and QA metrics.

- Frame AI testing tools as an augmentation of human expertise, not a replacement.

- Point to early successes to build momentum.

Once teams see the benefits and alignment, measuring clear success metrics ensures AI adoption drives lasting impact rather than just short-term improvements.

Measuring success in AI testing adoption

AI testing delivers real value when measurable improvements, such as reduced defect escape rates and stronger release confidence, translate into higher quality across devices and regions.

Key indicators of effective AI testing adoption include:

- Fewer false positives from AI-generated insights

- Defects prioritized by real business and user impact

- Faster detection of production-blocking issues

- Greater confidence among stakeholders in release readiness
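Prioritizing defects by user impact, rather than by raw count or severity alone, can be sketched as a scoring function. The formula below (severity weight multiplied by the share of users affected) is an illustrative assumption, not an established standard, and the weights and sample defects are made up.

```python
# Assumption: simple numeric weights per severity level.
SEVERITY_WEIGHT = {"critical": 4, "high": 3, "medium": 2, "low": 1}


def impact_score(defect):
    """Weight severity by the share of users who hit the defect (0.0 to 1.0)."""
    return SEVERITY_WEIGHT[defect["severity"]] * defect["affected_user_share"]


def prioritize(defects):
    """Order defects from highest to lowest estimated user impact."""
    return sorted(defects, key=impact_score, reverse=True)


defects = [
    {"id": "D1", "severity": "critical", "affected_user_share": 0.02},
    {"id": "D2", "severity": "medium", "affected_user_share": 0.40},
    {"id": "D3", "severity": "high", "affected_user_share": 0.10},
]

print([d["id"] for d in prioritize(defects)])  # ['D2', 'D3', 'D1']
```

In this example a medium-severity defect that affects 40% of users outranks a critical one that affects 2%. Teams may still want a rule that critical defects in high-risk flows are never deprioritized, which is where human judgment comes back in.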

Teams achieve effective adoption by applying clear insights and making high-quality decisions, which enable confident releases. Clear definitions of success set the stage for adopting best practices that ensure insights consistently drive actionable, reliable outcomes.

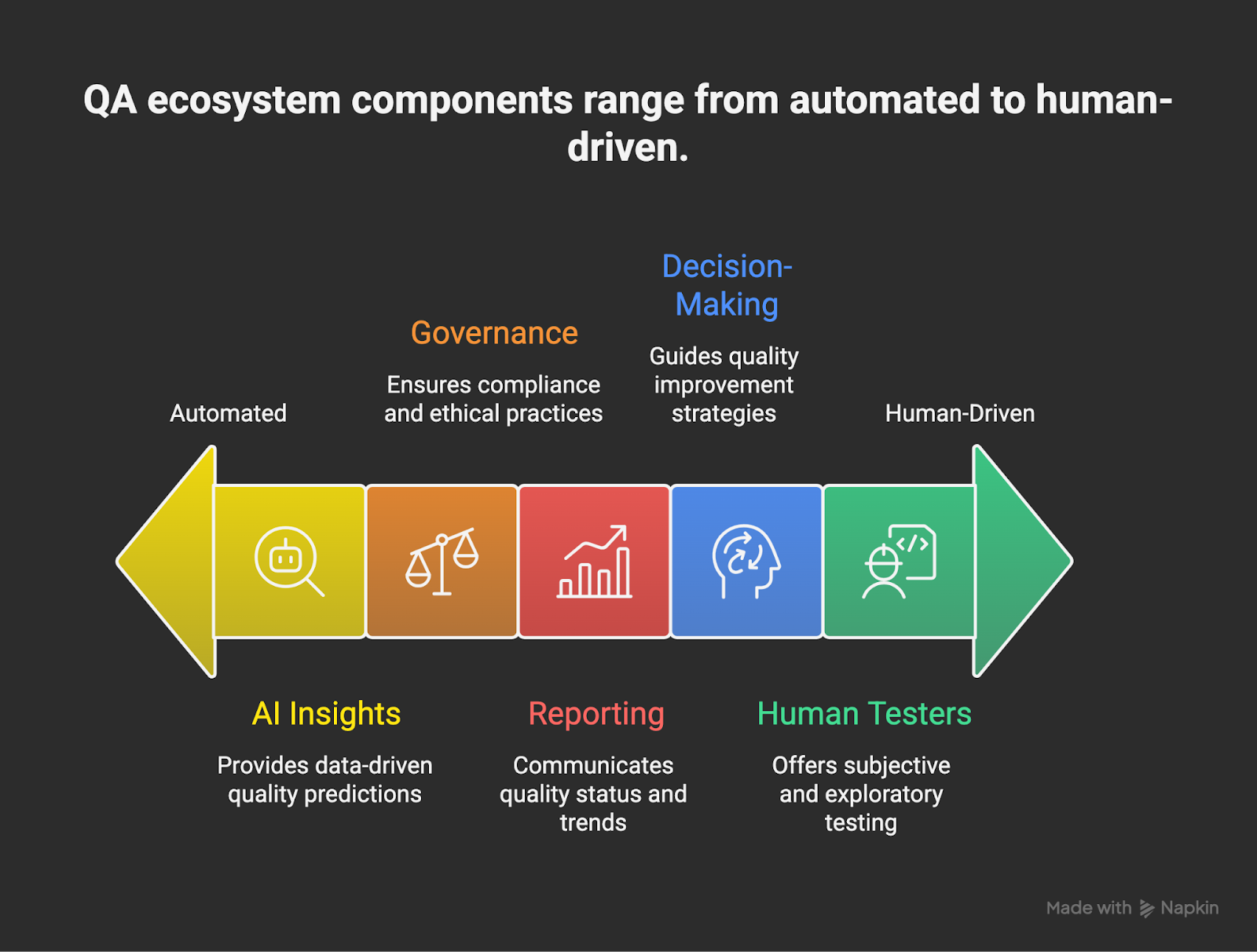

Best practices for sustainable AI testing adoption

Teams get the full value of testing by combining organized processes, aligned objectives, and real-world validation, with human judgment guiding every step.

Figure: Integrated QA Ecosystem

Follow these tested approaches from Global App Testing to enhance AI testing and ensure consistent, dependable outcomes:

- Use AI to support decisions while retaining human oversight.

- Test outputs in real-world conditions to confirm release readiness.

- Continuously improve data quality to protect the accuracy of insights.

- Apply findings to strengthen governance and strategic planning.

- Involve skilled testers to validate critical workflows.

- Embed AI within a connected quality ecosystem to maximize impact.

For example, Acasa reduced app crash rates and improved NPS by combining structured QA with real user behavior validation across devices and user contexts.

Sustainable implementation requires measurable impact on defect escape rates and release confidence.

Key takeaways

The key to successful testing is impact, not volume. Solving AI testing challenges requires focusing on insights that identify key risks, inform release decisions, and ensure software reliability across devices and regions.

Effective QA strategies combine real-world testing with processes and knowledge to fill coverage gaps.

Work with Global App Testing to enhance your testing strategy and confidently release high-quality products.
