Scaling AI testing across large product teams

Introduction

Imagine a product team managing several AI models across multiple features, such as fraud detection and customer support chat. Each sprint brings new model updates, new data, and new releases.

Tests are running, yet every deployment still feels uncertain. When AI testing does not scale, errors reach production, performance declines, and customer trust suffers.

Scaling enterprise AI testing across large product teams is very different from testing traditional software. Traditional QA methods were built for deterministic software. AI systems differ because their outputs vary with data patterns and user behavior.

Static test cases cannot fully capture this complexity. As the number of AI features grows, manual reviews and isolated validation processes create coverage gaps and slow down releases.

At Global App Testing, we help enterprises address these challenges through structured AI testing services. Our approach combines standardized workflows, real-world crowdtesting, and managed testing expertise to validate AI systems in diverse environments. This enables teams to scale testing across products while maintaining quality and compliance.

In this article, we explore the core challenges of scaling AI testing across large product teams and outline practical frameworks to build a scalable AI testing process.

How is scaling AI testing different from traditional QA?

AI systems are fundamentally different from traditional software, which changes how companies should approach validation at scale. Unlike standard applications, where the same input always produces the same output, AI models are probabilistic and evolve over time.

For example, a recommendation engine may give slightly different suggestions for the same user depending on recent training data or model updates. This requires testing strategies that account for variability, bias, and continuous learning.
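One way to handle this variability is to stop asserting an exact output. Instead, a test can assert that outputs stay close to a reference most of the time. The minimal sketch below assumes a hypothetical `recommend(seed)` function and measures closeness with Jaccard overlap between recommendation lists. The 0.6 overlap and 90% pass-rate thresholds are illustrative assumptions, not recommended values.

```python
import random
from typing import Callable, List


def jaccard(a: List[str], b: List[str]) -> float:
    """Share of items two recommendation lists have in common, ignoring order."""
    sa, sb = set(a), set(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


def passes_stability_check(
    recommend: Callable[[int], List[str]],
    reference: List[str],
    runs: int = 50,
    min_overlap: float = 0.6,
    min_pass_rate: float = 0.9,
) -> bool:
    """Tolerate variation, but require most runs to stay close to the reference."""
    close_runs = sum(
        jaccard(recommend(seed), reference) >= min_overlap for seed in range(runs)
    )
    return close_runs / runs >= min_pass_rate


# Hypothetical stand-in for a real recommender: a stable core plus one random extra.
CORE = ["a", "b", "c", "d"]


def toy_recommend(seed: int) -> List[str]:
    rng = random.Random(seed)
    return CORE + [rng.choice(["x", "y", "z"])]


print(passes_stability_check(toy_recommend, CORE + ["x"]))  # True
```

The test passes even though individual runs differ, because every run still shares at least four of its five items with the reference.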

Our team at Global App Testing helped LineTen overcome testing capacity challenges across multiple integrations and international markets. Using our global crowdtesting network, we increased test execution capacity by over 5x and integrated results directly into TestRail, giving the team centralized tracking and greater validation capability.

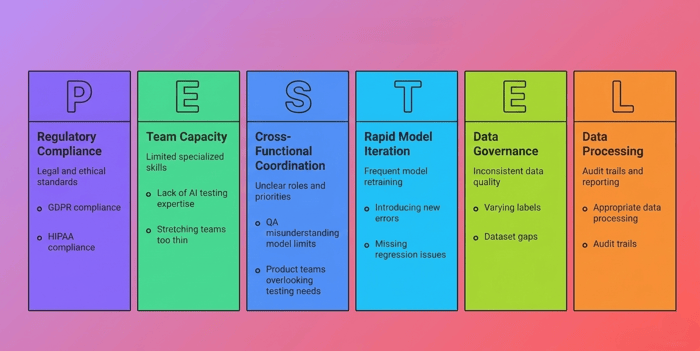

Core challenges in scaling AI testing across large product teams

Scaling AI testing across large product teams comes with unique challenges. These challenges make it hard to maintain consistent quality and keep up with rapid AI development.

Scaling AI testing challenges

Fragmented tooling and processes

Different teams often use different tools, validation methods, benchmarks, and metrics, such as accuracy, precision, or response time. This can lead to duplicated work and inconsistent quality, because one team's results cannot easily be compared with another's.

Rapid model iteration

Teams frequently retrain models, update APIs, and modify datasets, and each of these changes can introduce new errors. Without automated and standardized tests, QA teams fall behind and often miss regression issues, such as performance drops, bias shifts, or unexpected outputs, until users experience them.
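The sketch below shows one way to catch a bias shift before users do. It compares a baseline and a candidate model on the same labelled evaluation set and checks two things: overall accuracy and the accuracy gap between user groups. The data layout and tolerances are assumptions made for illustration. Real teams would choose fairness metrics suited to their product.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: one (group, true_label, predicted_label) tuple per evaluation example.
Example = Tuple[str, int, int]


def overall_accuracy(examples: List[Example]) -> float:
    return sum(y_true == y_pred for _, y_true, y_pred in examples) / len(examples)


def accuracy_by_group(examples: List[Example]) -> Dict[str, float]:
    totals: Dict[str, int] = {}
    correct: Dict[str, int] = {}
    for group, y_true, y_pred in examples:
        totals[group] = totals.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + int(y_true == y_pred)
    return {group: correct[group] / totals[group] for group in totals}


def group_gap(examples: List[Example]) -> float:
    """Difference in accuracy between the best- and worst-served groups."""
    accuracies = accuracy_by_group(examples).values()
    return max(accuracies) - min(accuracies)


def find_regressions(
    baseline: List[Example],
    candidate: List[Example],
    max_accuracy_drop: float = 0.02,  # illustrative tolerance
    max_gap_increase: float = 0.03,  # illustrative tolerance
) -> List[str]:
    problems = []
    drop = overall_accuracy(baseline) - overall_accuracy(candidate)
    if drop > max_accuracy_drop:
        problems.append(f"overall accuracy dropped by {drop:.3f}")
    gap_increase = group_gap(candidate) - group_gap(baseline)
    if gap_increase > max_gap_increase:
        problems.append(f"accuracy gap between groups grew by {gap_increase:.3f}")
    return problems
```

An empty list means neither check found a regression. Otherwise, each string describes one problem that a QA team could attach to the release review.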

Inconsistent data governance

Large enterprises use data from many sources with different quality levels. Labels may vary, and datasets may have gaps. Poor data governance leads to unreliable test results and makes it hard to track model performance over time or across regions.
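A simple first governance check is to flag items that different data sources label differently. This sketch assumes records arrive as hypothetical `(source, item_id, label)` tuples. It normalizes case and whitespace before comparing labels. It catches only direct conflicts, not subtler labelling inconsistencies.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

# Hypothetical record layout: (source, item_id, label)
Record = Tuple[str, str, str]


def conflicting_labels(records: Iterable[Record]) -> Dict[str, Set[str]]:
    """Return item IDs that received more than one distinct label across sources."""
    labels: Dict[str, Set[str]] = defaultdict(set)
    for _source, item_id, label in records:
        # Normalize so "Fraud" and "fraud " count as the same label.
        labels[item_id].add(label.strip().lower())
    return {item_id: found for item_id, found in labels.items() if len(found) > 1}


records = [
    ("eu_vendor", "txn-1", "fraud"),
    ("us_vendor", "txn-1", "Fraud "),
    ("eu_vendor", "txn-2", "fraud"),
    ("us_vendor", "txn-2", "legitimate"),
]
print(conflicting_labels(records))  # flags only txn-2
```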

Cross-functional coordination gaps

AI testing depends on close collaboration between data scientists, engineers, QA, and product teams. In large organizations, unclear roles and conflicting priorities create gaps. QA may not understand model limits, and product teams may overlook testing needs. This misalignment leads to delays and higher production risks.

Internal team capacity constraints

AI testing needs specialized skills like model evaluation, drift detection, and fairness testing. Many QA teams lack this expertise. Running multiple AI projects at once can also stretch teams too thin, slowing validation and releases.
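To make drift detection more concrete, here is a minimal sketch of the Population Stability Index (PSI). PSI is one common way to compare a feature's distribution in production against a reference sample. The equal-width binning and the small floor value are simplifying assumptions. Thresholds such as 0.1 (worth watching) and 0.25 (significant shift) are widely used rules of thumb, not formal standards.

```python
import math
from typing import List, Sequence


def psi(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    floor: float = 1e-6,
) -> float:
    """Population Stability Index of `current` against `reference` (equal-width bins)."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant reference

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clipped into the edge bins.
            index = min(max(int((v - lo) / width), 0), bins - 1)
            counts[index] += 1
        # Floor empty bins so the log term below stays finite.
        return [max(c / len(values), floor) for c in counts]

    ref, cur = bin_shares(reference), bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference = [i / 100 for i in range(100)]
shifted = [0.9 + i / 1000 for i in range(100)]
print(round(psi(reference, reference), 6))  # 0.0
print(psi(reference, shifted) > 0.25)  # True
```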

Compliance and regulatory requirements

AI testing must also comply with legal and ethical standards, including regulations such as GDPR and HIPAA. This requires appropriate data handling, audit trails, and reporting that satisfy both regulators and internal risk management.
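Audit trails are one part of this. The sketch below makes a log of test runs tamper-evident by chaining SHA-256 hashes. Each record stores a fingerprint of the dataset rather than the raw data. The field names are hypothetical. A hash chain on its own does not make a process GDPR or HIPAA compliant.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: Dict[str, Any]) -> str:
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_record(
    log: List[Dict[str, Any]],
    model_version: str,
    dataset_bytes: bytes,
    results: Dict[str, float],
    reviewer: str,
) -> Dict[str, Any]:
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Store a fingerprint of the evaluation data, not the (possibly personal) data itself.
        "dataset_sha256": hashlib.sha256(dataset_bytes).hexdigest(),
        "results": results,
        "reviewer": reviewer,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS,
    }
    record["record_hash"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Detect edits to earlier records or breaks in their order."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev or _digest(body) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True
```

Editing any earlier record changes its hash, so `verify_chain` fails from that point on.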

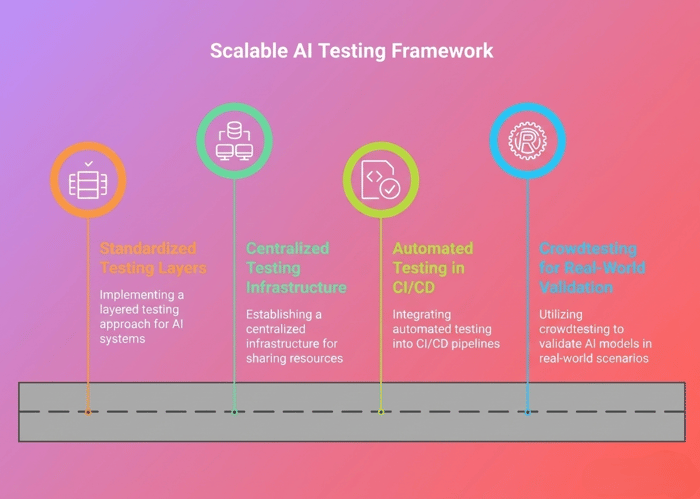

Building a scalable AI testing framework

AI testing should be structured and repeatable to support large product teams. Without it, testing becomes fragmented and error-prone.

Standardized testing layers

A layered testing approach helps validate every aspect of an AI system before deployment. Common layers include:

- Unit testing: ML engineers validate individual model components, feature pipelines, and preprocessing logic to ensure technical correctness.

- Dataset validation: Data scientists and data engineers verify that training and test datasets are clean, balanced, representative, and free from leakage.

- Integration testing: QA and engineering teams confirm that models function correctly within applications, APIs, and deployment pipelines.

- Security testing: Security specialists test for vulnerabilities such as prompt injection, adversarial inputs, and data exposure risks.

- Human evaluation: Domain experts and QA teams review outputs for bias, fairness, contextual accuracy, and unexpected behavior.

This approach reduces blind spots and helps keep AI systems reliable across environments.
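The dataset validation layer is one of the easiest to automate. The minimal sketch below assumes the test set is a list of hypothetical `(text, label)` pairs. It flags exact-match leakage between training and test data, and it flags classes that are underrepresented in the test set. Catching near-duplicate leakage would need fuzzier matching. The 5% minimum share is an arbitrary example value.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def class_shares(labels: Sequence[str]) -> Dict[str, float]:
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def validate_split(
    train_texts: Sequence[str],
    test_set: Sequence[Tuple[str, str]],  # hypothetical (text, label) pairs
    min_class_share: float = 0.05,  # arbitrary example threshold
) -> List[str]:
    issues = []
    # Exact-match leakage: identical examples in both training and test data.
    leaked = set(train_texts) & {text for text, _ in test_set}
    if leaked:
        issues.append(f"{len(leaked)} test example(s) also appear in training data")
    # Balance: flag classes too rare to evaluate reliably.
    shares = class_shares([label for _, label in test_set])
    for label, share in sorted(shares.items()):
        if share < min_class_share:
            issues.append(f"class '{label}' is only {share:.1%} of the test set")
    return issues
```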

Centralized testing infrastructure

Centralized infrastructure allows teams to share tools, datasets, and evaluation standards:

- Shared evaluation datasets: Provide a single source of truth for testing across multiple teams.

- Unified metrics dashboards: Combined metrics help track model performance, accuracy, drift, and compliance in one place.

- Automated regression testing: Runs tests automatically whenever a model is updated or retrained.

- Reproducibility frameworks: Ensure test results can be replicated across teams and over time.
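Reproducibility usually comes down to pinning what went into an evaluation. The minimal sketch below assumes an evaluation is defined by a dataset plus a configuration dictionary; both are hypothetical here. It hashes them into a run fingerprint and draws any sampled subset from a seeded, local random generator.

```python
import hashlib
import json
import random
from typing import Any, Dict, List


def run_fingerprint(dataset: List[str], config: Dict[str, Any]) -> str:
    """Short ID that changes if the data or any evaluation setting changes."""
    h = hashlib.sha256()
    for row in dataset:
        h.update(row.encode("utf-8"))
        h.update(b"\x00")  # separator so ["ab", "c"] and ["a", "bc"] hash differently
    h.update(json.dumps(config, sort_keys=True).encode("utf-8"))
    return h.hexdigest()[:16]


def sample_eval_set(dataset: List[str], size: int, seed: int) -> List[str]:
    # A local generator keeps the sample independent of other code using `random`.
    rng = random.Random(seed)
    return rng.sample(dataset, size)


data = [f"example-{i}" for i in range(1000)]
config = {"model": "support-chat-v7", "seed": 42}  # hypothetical settings
print(run_fingerprint(data, config))
print(sample_eval_set(data, 5, seed=config["seed"]))
```

If two teams get the same fingerprint, they evaluated the same data under the same settings. Python does not guarantee that seeded `sample()` results stay identical across Python versions, so pin the interpreter version as well.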

Centralization helps eliminate duplicated effort and improves transparency across large organizations. We at Global App Testing support enterprise teams by standardizing AI testing workflows, validating systems across diverse environments, and embedding scalable AI testing practices in CI/CD pipelines.

Automated testing in CI/CD

DevOps engineers and release managers integrate automated AI testing into CI/CD pipelines to keep validation continuous despite frequent model updates. Key benefits include:

- Continuous validation for every model version

- Regression testing across updates

- Benchmarking of speed, resource usage, and reliability

Teams can validate AI models more quickly and reduce deployment risk by integrating testing into CI/CD pipelines.
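A common pattern is a pipeline step that compares the candidate model's evaluation report against the current production baseline. The step fails the build if any metric regresses beyond a tolerance. The sketch below assumes both reports are plain dictionaries. The metric names and tolerances are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical rules: metric -> (which direction is better, largest tolerated regression).
RULES: Dict[str, Tuple[str, float]] = {
    "accuracy": ("higher", 0.01),
    "p95_latency_ms": ("lower", 20.0),
    "peak_memory_mb": ("lower", 50.0),
}


def gate(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    rules: Dict[str, Tuple[str, float]] = RULES,
) -> List[str]:
    """Return a list of failures; an empty list means the candidate may ship."""
    failures = []
    for metric, (better, tolerance) in rules.items():
        if metric not in candidate:
            failures.append(f"{metric}: missing from candidate report")
            continue
        delta = candidate[metric] - baseline[metric]
        # Express every regression as a positive number, whatever the metric's direction.
        regression = -delta if better == "higher" else delta
        if regression > tolerance:
            failures.append(f"{metric}: regressed by {regression:g} (tolerance {tolerance:g})")
    return failures


baseline = {"accuracy": 0.91, "p95_latency_ms": 180.0, "peak_memory_mb": 900.0}
candidate = {"accuracy": 0.905, "p95_latency_ms": 240.0, "peak_memory_mb": 910.0}
print(gate(baseline, candidate))  # ['p95_latency_ms: regressed by 60 (tolerance 20)']
```

In a pipeline, the step would print the failures and exit with a non-zero status, for example `sys.exit(1 if failures else 0)`, so that the CI system blocks the release.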

Crowdtesting for real-world validation

Automated tests play an important role, but they cannot anticipate every real-world situation. Crowdtesting complements automation by using a distributed group of testers to verify models under varied conditions:

- Identify edge cases that automated tests may miss

- Validate AI performance across different devices, geographies, and user environments

- Provide rapid feedback to improve models before release

Crowdtesting helps companies scale validation while keeping internal teams focused on development and core testing tasks.

Our managed crowdtesting network provides real-world validation across many environments, devices, and user contexts. For example, our team at Global App Testing supported Facebook in scaling partner app validation: our global testing community tested 5,000 apps across devices and operating systems.

This significantly expanded validation coverage while maintaining speed and quality standards.

Organizational strategies for large product teams

AI testing on a large product team requires a defined organizational framework. Most companies use a hub-and-spoke model. In this structure, a central team sets standards and governance, while individual product teams own their models and day-to-day testing. This approach balances control with flexibility.

Creating an AI testing center of excellence (CoE)

Many enterprises establish a central team responsible for AI testing standards. This team defines policies, tools, and best practices, and product teams follow these guidelines while keeping ownership of their models.

Cross-team collaboration models

AI testing requires cooperation between data scientists, engineers, QA teams, and security experts. Companies can use a RACI matrix to define who is Responsible, Accountable, Consulted, and Informed at each stage of the AI lifecycle.

For example, data teams validate datasets, QA reviews model behavior, and engineering teams monitor production performance. Defined roles reduce confusion and delays across multiple teams and products.
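A RACI matrix can also live alongside the test configuration, where it can be checked automatically. This sketch encodes the example above as a dictionary. The team names and activities are illustrative. The check enforces the usual RACI rules: each activity needs exactly one Accountable party and at least one Responsible party.

```python
from typing import Dict, List

# Illustrative matrix: activity -> {team: RACI letters}. "RA" means both Responsible and Accountable.
RACI: Dict[str, Dict[str, str]] = {
    "dataset validation": {"data": "RA", "qa": "C", "product": "I"},
    "model behavior review": {"qa": "RA", "data": "C", "product": "I"},
    "production monitoring": {"engineering": "RA", "qa": "C", "product": "I"},
}


def check_raci(matrix: Dict[str, Dict[str, str]]) -> List[str]:
    problems = []
    for activity, assignments in matrix.items():
        accountable = [team for team, roles in assignments.items() if "A" in roles]
        responsible = [team for team, roles in assignments.items() if "R" in roles]
        if len(accountable) != 1:
            problems.append(
                f"{activity}: needs exactly one Accountable team, found {len(accountable)}"
            )
        if not responsible:
            problems.append(f"{activity}: no Responsible team")
    return problems


print(check_raci(RACI))  # []
```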

Crowdtesting as a strategic capability

Crowdtesting adds human insight at scale. It helps teams:

- Capture real-world user scenarios, edge cases, and device variability.

- Test models across diverse environments without overloading internal resources.

- Feed results back into CI/CD pipelines, dashboards, and validation frameworks.
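Feeding crowdtesting results back into pipelines often means turning confirmed findings into permanent regression cases. The sketch below assumes a hypothetical, already-triaged `Finding` record. It keeps only confirmed findings and removes duplicate reports of the same prompt on the same locale and device.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Finding:
    """Hypothetical shape of a triaged crowdtesting report."""
    prompt: str
    locale: str
    device: str
    expected: str
    confirmed: bool


@dataclass(frozen=True)
class RegressionCase:
    case_id: str
    prompt: str
    locale: str
    device: str
    expected: str


def to_regression_cases(findings: Iterable[Finding]) -> List[RegressionCase]:
    seen: Set[Tuple[str, str, str]] = set()
    cases: List[RegressionCase] = []
    for f in findings:
        # Treat reports of the same prompt on the same locale and device as duplicates.
        key = (f.prompt.strip().lower(), f.locale, f.device)
        if not f.confirmed or key in seen:
            continue
        seen.add(key)
        case_id = f"crowd-{len(cases) + 1:04d}"
        cases.append(RegressionCase(case_id, f.prompt, f.locale, f.device, f.expected))
    return cases
```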

Crowdtesting becomes a strategic resource when coordinated by the CoE. It validates AI models across regions, devices, and user scenarios before release.

Large enterprises often combine internal governance models with external expertise to ensure consistency across teams. By partnering with specialists such as Global App Testing (GAT), the CoE can offload much of the global validation workload while keeping strict oversight of the data.

Shared testing infrastructure

Companies can improve efficiency with centralized tools, datasets, and reusable evaluation pipelines. For large SaaS, fintech, and e-commerce enterprises, centralized monitoring dashboards can provide visibility into AI performance and reliability across all products.

When to consider specialized AI testing services

Specialized AI testing services help internal teams scale when in-house capacity is no longer enough, for example when tooling is fragmented, multiple product teams are shipping AI features, compliance requirements are rising, or real-world validation is needed.

We at GAT offer scalable testing solutions that integrate crowdtesting, automated pipelines, and structured frameworks, helping keep AI systems reliable and high-quality across all products.

See how our team at Global App Testing helped Carry1st test checkout flows across regions and devices. Real-world testing uncovered payment issues that internal tests missed, boosting local checkout completion by 12% and improving the user experience.

Scale enterprise AI testing with GAT

At Global App Testing, we support large product teams in building structured AI testing programs that grow with their model portfolio and release cycles. Our approach focuses on consistency, real-world validation, and operational alignment across teams. Our services combine:

- Crowdtesting at scale to validate AI models in real-world conditions

- Managed AI testing workflows to standardize processes across teams

- Integration with CI/CD pipelines for continuous model validation

- Centralized dashboards and reporting for transparency and compliance

Speak to us today to make sure your AI models perform reliably at scale!
