Software Testing – What is it? Everything to Know

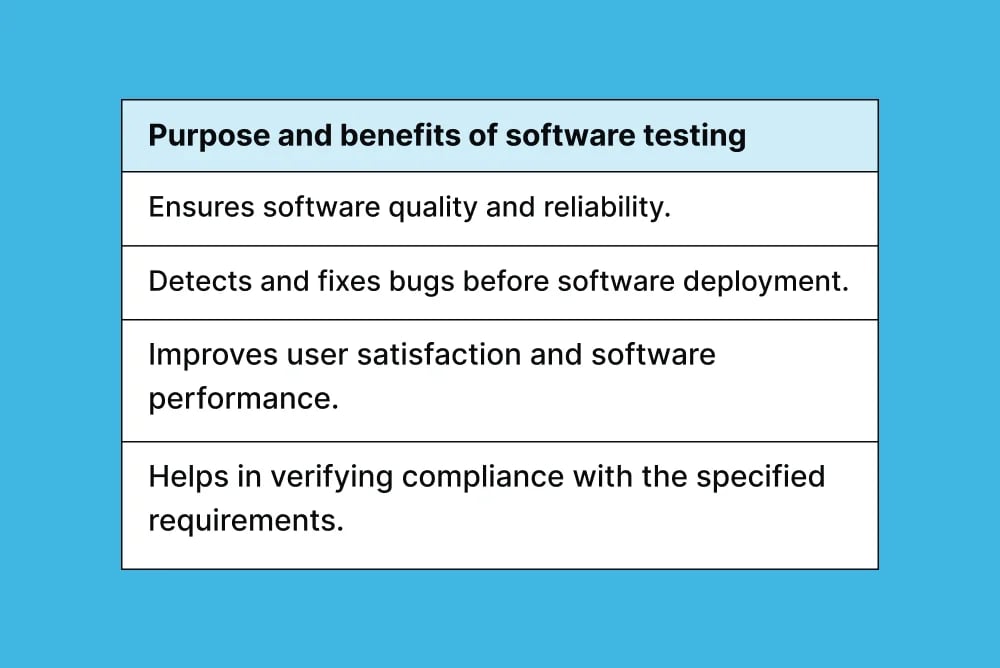

You can buy the best golf clubs on the market, but they won't magically lower your handicap unless you know how to use them. Software testing is no different: it won't give you good results unless you know how and when to apply it, what to test, and who should do the testing. Done well, software testing helps ensure that an application reaches users at the highest possible quality and catches issues before they become bottlenecks.

There are many ways you can approach software testing. However, it's easy to get confused by the sheer number of testing types and how they overlap, let alone what each does.

So, let's start with the basics and cover all the testing subcategories along the way!


What is software testing?

Software testing is a comprehensive process that ensures that software applications are reliable, secure, and user-friendly. It encompasses a range of techniques and methodologies, each targeting different aspects of software to provide a quality product. In this ultimate guide to software testing, we will cover the following:

1. Types of testing:

- Manual testing: Testers manually execute test cases without automation tools.

- Automated testing: Uses tools and scripts to automatically run test cases, increasing efficiency, especially for repetitive tasks.

2. Testing approaches:

- White Box testing: Focuses on the internal structure and logic of the code.

- Black Box testing: Evaluates the software's functionality without looking at the internal code structure.

- Grey Box testing: Combines white and black box testing elements, offering a more comprehensive approach.

3. Functional vs. Non-Functional testing:

- Functional testing: Assesses specific functions or features of the software.

- Non-Functional testing: Evaluates performance, usability, reliability, etc.

4. Stages of testing:

- Unit testing: Testing individual components or units.

- Integration testing: Testing combined application parts to ensure they work together.

- System testing: Testing the complete and integrated software.

- Acceptance testing: Final testing to ensure the software meets user requirements.

Manual Testing

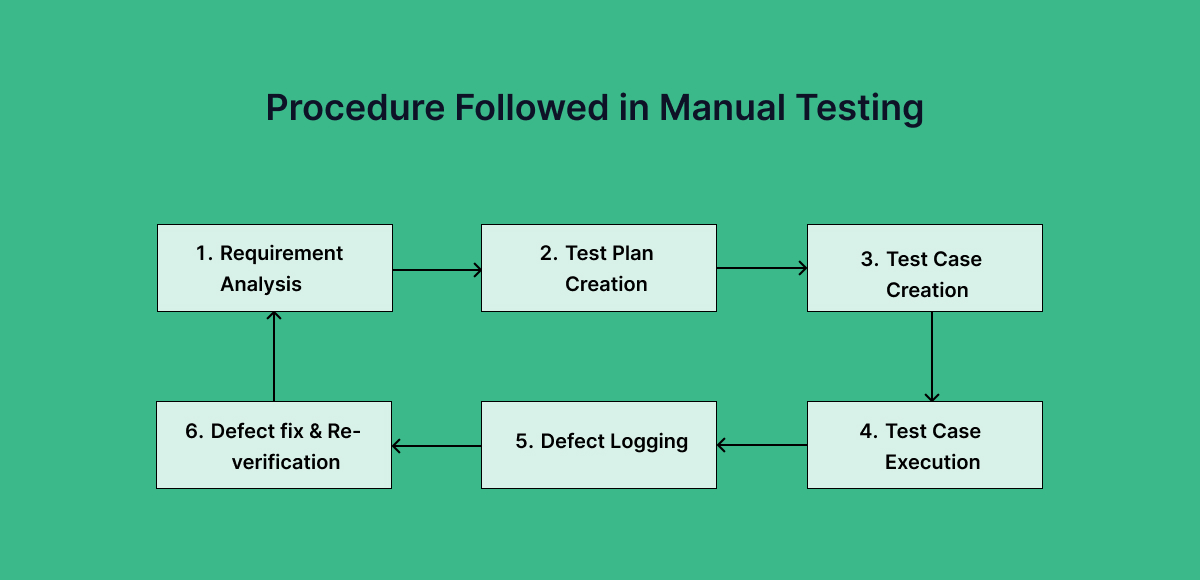

Manual testing is a process where software testers manually execute test cases without using any automation tools. They play the end-user role and try to find as many bugs in the application as quickly as possible. The bugs are collated into a report and passed to the developers to review and fix. Manual testing often focuses on usability, performance testing, and software quality assessment.

Manual testing is further divided into:

- Scripted testing, which follows predefined test cases, and

- Non-scripted testing, which includes more adaptive and investigative forms like Exploratory testing.

Automated Testing

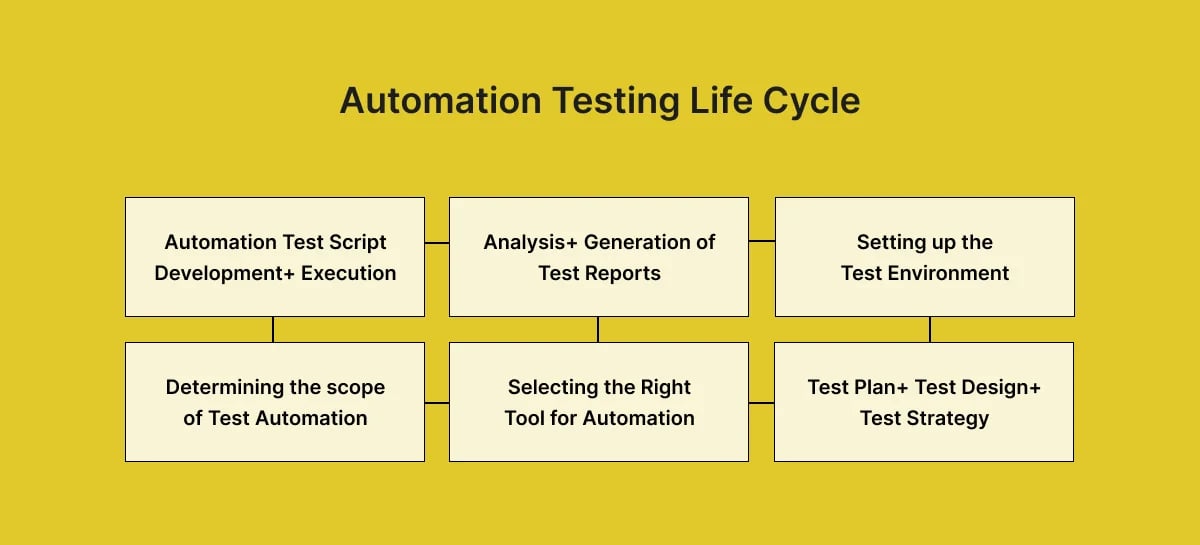

Automated testing is when an automation tool executes pre-scripted test cases. Test automation aims to simplify the testing process and make it more efficient. If a particular form of testing consumes a large share of the quality assurance team's time, it could be a good candidate for automation. Acceptance testing, integration testing, and functional testing are all well suited to automation. Checking login processes, for instance, is an excellent example of when to use automated testing, because the same steps are repeated on every run.
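To make the login example more concrete, here is a minimal sketch of what a pre-scripted check might look like in Python. The `authenticate` function and the `USERS` table are hypothetical stand-ins for a real application's login logic, and the `test_` functions are written so that a test runner such as pytest could collect them.

```python
# Hypothetical user store standing in for a real authentication backend.
USERS = {"alice": "s3cret"}


def authenticate(username: str, password: str) -> bool:
    """Return True only when the user exists and the password matches."""
    return username in USERS and USERS[username] == password


def test_valid_credentials_are_accepted():
    assert authenticate("alice", "s3cret")


def test_wrong_password_is_rejected():
    assert not authenticate("alice", "wrong")


def test_unknown_user_is_rejected():
    assert not authenticate("bob", "s3cret")
```

Once written, checks like these can be re-run on every build at almost no extra cost, which is where automation tends to pay off.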

Automated testing vs. Manual testing

Speed and efficiency:

- Automated testing is typically faster than manual testing.

- Valuable for accelerating the software development lifecycle.

- Increases productivity and reduces testing time for most apps/websites.

Cost considerations:

- Higher initial setup costs due to purchasing automation tools, training, and preparing tutorials.

- Offers long-term cost savings despite the initial investment.

- Regular script maintenance can be costly, especially for frequently changing apps/websites.

Suitability for different tasks:

- Ideal for repetitive tasks, reducing manual inefficiency and human error.

- It is particularly effective for stress testing and smoke testing.

- Not suitable for all testing types: user interface, documentation, installation, compatibility, and recovery tests may be better conducted manually.

- Even with automation, some manual testing is usually necessary.

What are the methods for software testing?

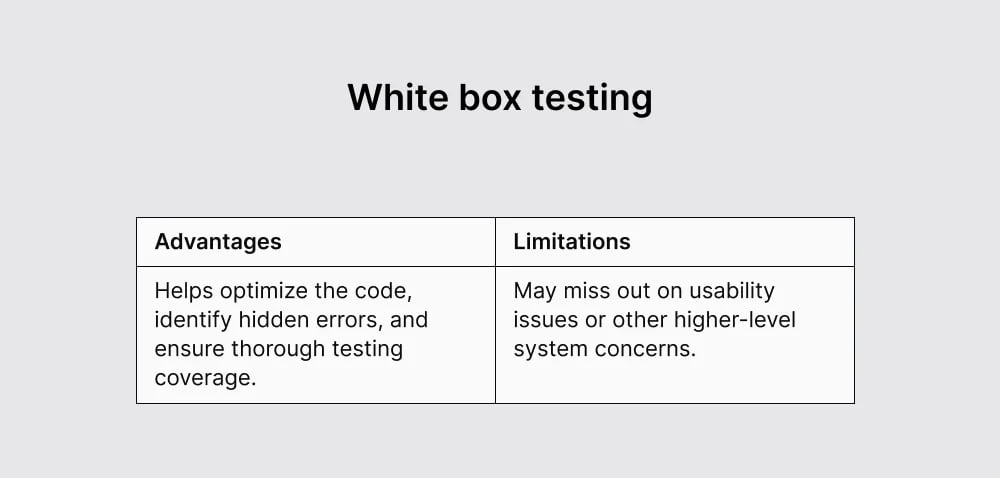

White box, black box, and grey box testing are the fundamental approaches in software testing, each with its own focus and methodology.

1. White Box Testing (Clear Box/Structural Testing)

White box testing involves testing the software's internal structure, design, and coding. In white box testing, the tester has complete visibility of the source code and uses this knowledge to design test cases. The main focus is on the logical flow of the software, code structure, conditions, paths, and branches.

Techniques:

- Unit testing: Testing individual units or components of the software.

- Integration testing: Testing the interfaces and interactions between integrated units/modules.

- Code coverage analysis: Ensuring that a certain percentage of the code is executed during testing.

- Static code analysis: Examining the code without executing it to find vulnerabilities or coding standards violations.

It often involves the use of automated tools and requires programming knowledge.

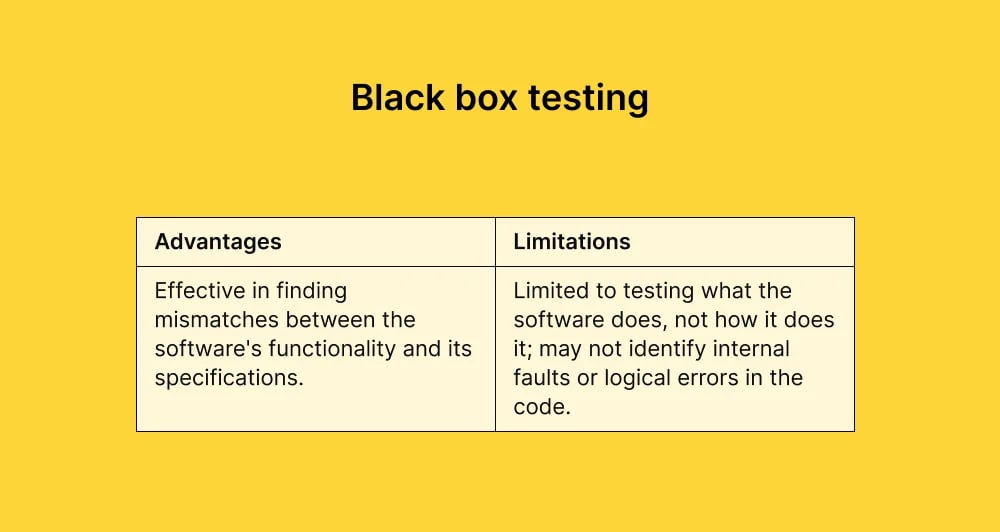

2. Black Box Testing (Functional/Behavioral Testing)

Black box testing focuses on the software's functionality without considering its internal code structure. The tester is unaware of the internal workings of the application and tests the software by checking its inputs and outputs. The primary focus is on the software's functionality, behavior, and requirements.

Techniques:

- Functional testing: Testing the application against its functional requirements.

- System testing: Testing the complete and integrated software.

- Acceptance testing: Verifying whether the software meets the end-user requirements and is ready for release.

- Usability testing: Assessing the user-friendliness and ease of use of the application.

This testing can be done manually or with automated tools but does not require knowledge of the programming language or the internal structure of the software.

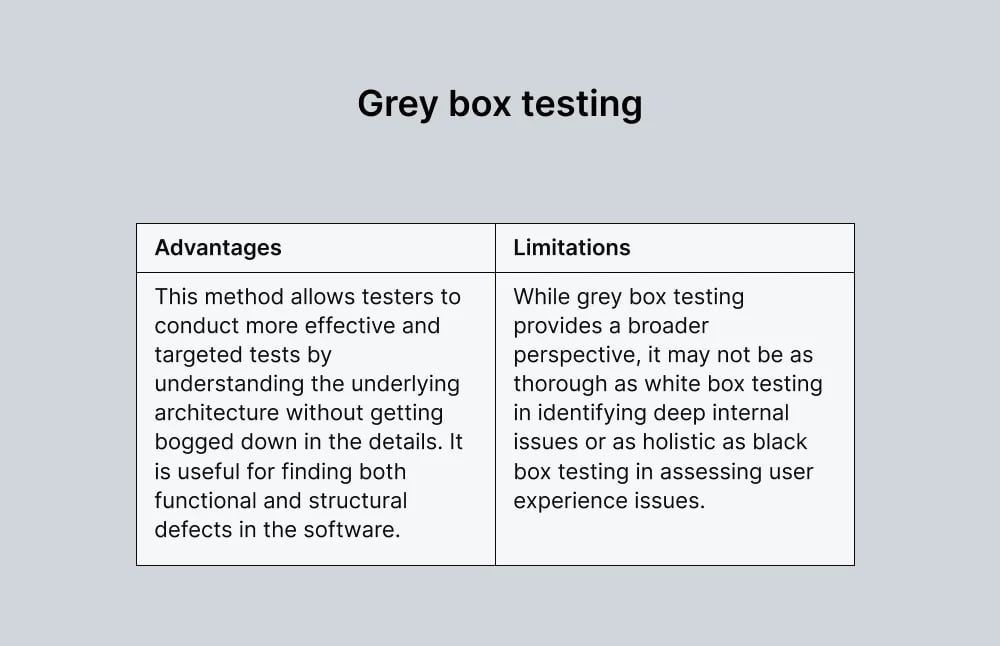

3. Grey Box Testing

Grey box testing is called that because it's seen as a middle ground between the "white" (clear, open to view) and the "black" (opaque, closed to view) testing methodologies. This approach provides a more comprehensive testing perspective by considering both the software's internal workings and external functionality.

Key characteristics:

- Unlike white box testing, where the tester has full knowledge of the internal code, grey box testing requires only partial knowledge – for example, information about algorithms, data structures, or code snippets.

- It is particularly effective in integration testing, where different components or systems are interfaced. It helps identify issues related to data flow and improper use of interfaces.

- Grey box testers may use tools like debuggers to assess how the system behaves under test and also rely on black box methods like high-level testing of functionality.

- It is often used in security testing. Testers have enough knowledge to test potential weak points in the system and simulate an external attacker with a limited understanding of the system internals.

Functional vs. Non-Functional Testing

Software testing can also be broadly categorized into two additional types:

- Functional Testing: This type assesses the quality, features, and functionality of the software code. It aims to verify that each function of the software application operates per the specified requirements.

- Non-functional Testing: This type focuses on the software application's performance and quality in real-world scenarios. It includes testing aspects like performance, scalability, reliability, and usability, which are not directly related to specific behaviors or functions of the system.

Functional testing types

Functional testing validates the software system against the functional requirements/specifications. Here are the various types of functional testing:

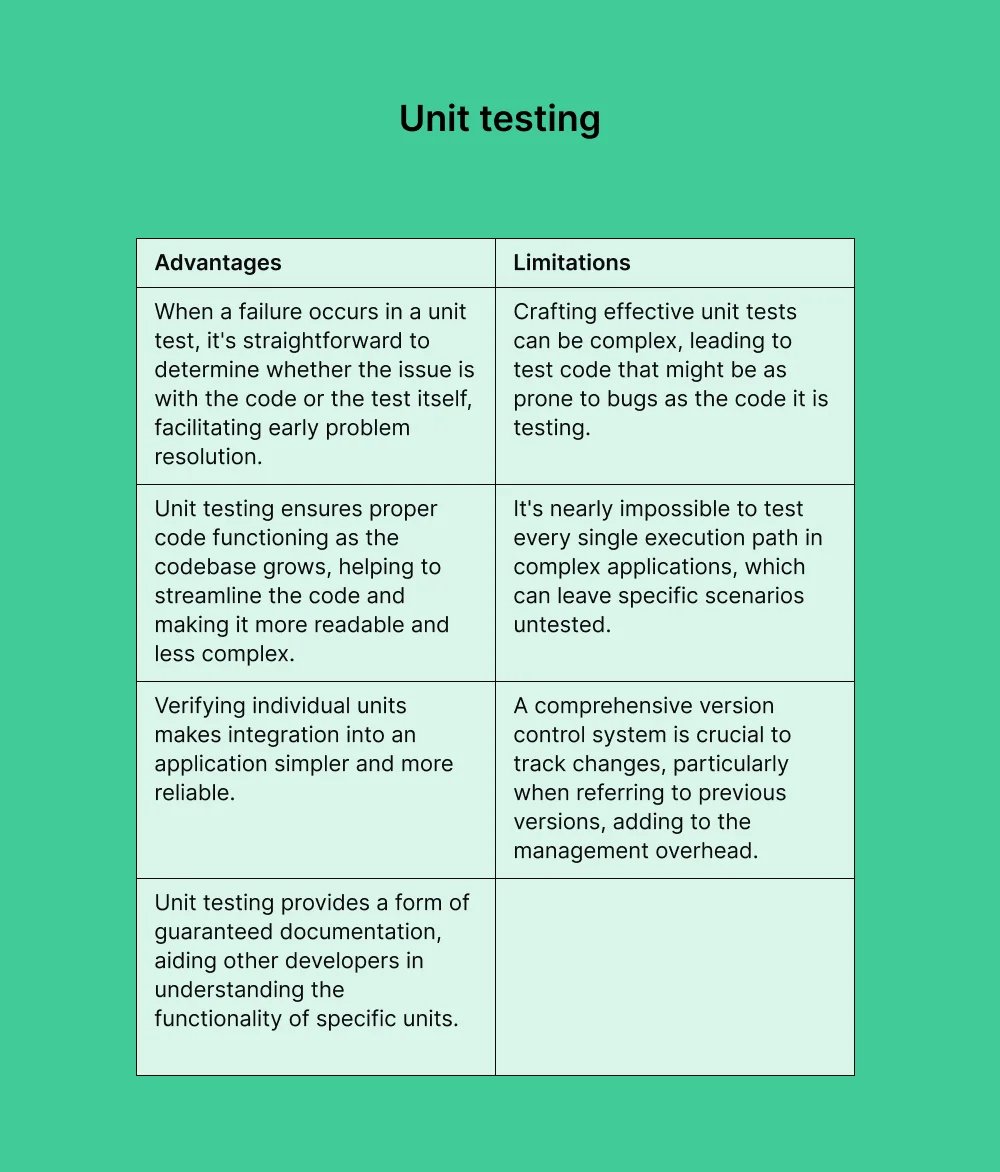

1. Unit Testing

Unit testing is testing individual units or components of an application. The aim is to ensure that each unit performs as designed. It is typically carried out by developers rather than the quality assurance team, as it requires a detailed knowledge of the internal program design and source code and belongs to the White box approach.

The techniques employed include:

- Branch coverage: This technique ensures that every branch leaving a decision point is taken at least once, covering both the True and False outcomes of each condition. For instance, in an If-Then-Else statement, testing covers both the 'Then' branch and the 'Else' branch.

- Statement coverage: In this approach, every single statement within a function or module is executed at least once during the testing process to ensure full coverage.

- Boundary value analysis: This involves creating test data for both the boundary values and the values immediately adjacent to these boundaries. For example, if testing the valid days of a month, which range from 1 to 31, the testing would include these boundary values (1 and 31) and check the adjacent invalid values (0 and 32) to evaluate how the system handles such scenarios.

- Decision coverage: This technique ensures that every decision point in the code, such as the condition of a "Do-While" loop or each arm of a "Case" statement, takes each of its possible outcomes at least once during the test.
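The day-of-month example above can be sketched as a small unit test. This is only an illustration: `is_valid_day` is a hypothetical function invented for this example, and the test data is chosen so that the boundary values, their neighbours, and both branches of the `if`/`else` are exercised.

```python
def is_valid_day(day: int) -> bool:
    """Hypothetical validator: accept day-of-month values from 1 to 31."""
    if 1 <= day <= 31:
        return True   # 'then' branch
    else:
        return False  # 'else' branch


def test_boundaries_and_neighbours():
    # Boundary values (1, 31), valid neighbours (2, 30), invalid neighbours (0, 32).
    cases = {0: False, 1: True, 2: True, 30: True, 31: True, 32: False}
    for day, expected in cases.items():
        assert is_valid_day(day) is expected
```

Because the cases include both valid and invalid days, every statement and both branches of the function are executed at least once.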

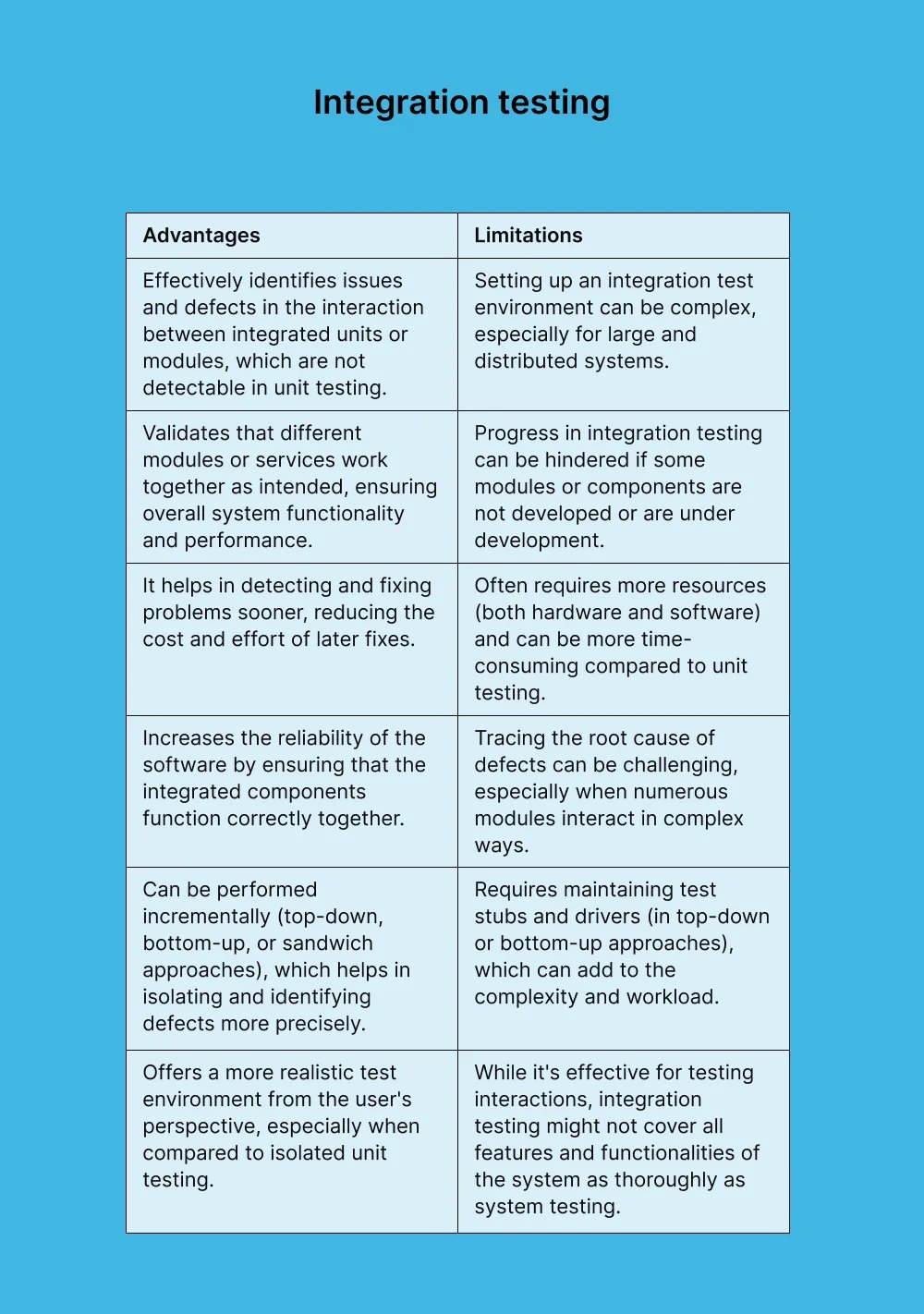

2. Integration Testing

Integration testing is where individual units or components of a software application are combined and tested as a group. The primary purpose of integration testing is to identify and address issues related to the interaction and integration between these individual parts.

For example, it can include issues like data format mismatches, interface mismatches, faulty communication among components, or failure to handle dependencies properly.

Integration testing usually follows unit testing (where individual components are tested in isolation). After units are individually tested, they are gradually aggregated and tested together in a process that can be incremental or all at once, depending on the testing strategy.

Strategies:

1. Big Bang approach: All components or modules are integrated simultaneously and then tested as a whole. This approach is more straightforward but can lead to challenges in identifying the root cause of failures.

2. Incremental approach: Integration and testing are done step by step. It includes:

- Top-down integration: Integration testing begins from the top of the module hierarchy and progresses downwards.

- Bottom-up integration: Starts integrating from the lowest level modules and moves upwards.

- Hybrid integration (Sandwich): Combines both top-down and bottom-up approaches.

Various tools are used to facilitate integration testing. These might automate the process, simulate components (stubs and drivers), or help manage test cases.
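As a rough sketch of how a stub can stand in for a component that isn't integrated yet (as in top-down integration), consider the example below. `OrderService` and `PaymentGatewayStub` are hypothetical names made up for illustration; the test functions play the role of the driver that calls into the module under test.

```python
class PaymentGatewayStub:
    """Stub replacing a lower-level module that is not integrated yet."""

    def __init__(self, should_succeed: bool):
        self.should_succeed = should_succeed
        self.charged = []  # records calls so the test can inspect them

    def charge(self, amount_cents: int) -> bool:
        self.charged.append(amount_cents)
        return self.should_succeed


class OrderService:
    """Higher-level module under test; it relies on a gateway's charge()."""

    def __init__(self, gateway):
        self.gateway = gateway

    def place_order(self, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        return "confirmed" if self.gateway.charge(amount_cents) else "declined"


def test_order_confirmed_when_charge_succeeds():
    gateway = PaymentGatewayStub(should_succeed=True)
    assert OrderService(gateway).place_order(1999) == "confirmed"
    assert gateway.charged == [1999]  # the interface was called as expected


def test_order_declined_when_charge_fails():
    gateway = PaymentGatewayStub(should_succeed=False)
    assert OrderService(gateway).place_order(1999) == "declined"
```

When the real payment module is ready, it can replace the stub and the same tests can be re-run to check that the two parts still work together.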

It's considered more comprehensive than unit testing but less so than system testing. Integration testing focuses on the architectural structure of the application.

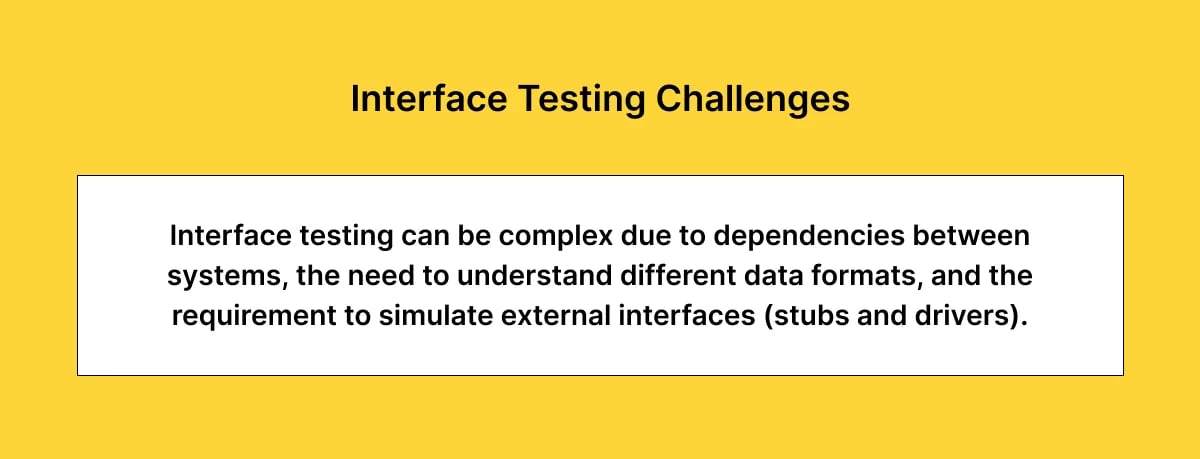

3. Interface testing

Interface testing verifies the interactions and data exchanges between different components or systems. It's specifically concerned with ensuring that interfaces between software modules, systems, or services function correctly according to specifications and expected behavior.

Components tested:

- User interfaces: Ensuring that the graphical user interface meets all specifications and is user-friendly.

- Software interfaces: Testing the interactions between different software modules, like APIs (Application Programming Interfaces).

- Hardware interfaces: Verifying the communication between the software and the hardware components.

- Network interfaces: Assessing the data exchange over network connections, including protocols and network configurations.

Types of Interface testing:

- API testing: Involves testing application programming interfaces directly and as part of integration testing.

- Message queue testing: Involves systems that communicate through message queues, ensuring messages are sent and received correctly.

- File transfer testing: Ensures files are properly transferred (uploaded/downloaded) between systems or modules.
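Message queue testing, for instance, might look something like the sketch below. It uses Python's in-process `queue.Queue` as a stand-in for a real message broker, and the message format (an `id` and a `type` field) is an assumption made purely for illustration.

```python
import json
import queue


def publish(q: queue.Queue, event: dict) -> None:
    """Producer side: serialise the event and put it on the queue."""
    q.put(json.dumps(event))


def consume(q: queue.Queue) -> dict:
    """Consumer side: read one message and check the agreed fields exist."""
    message = json.loads(q.get_nowait())
    missing = {"id", "type"} - set(message)
    if missing:
        raise ValueError(f"message is missing fields: {sorted(missing)}")
    return message


def test_message_round_trip():
    q = queue.Queue()
    event = {"id": 1, "type": "order_created"}
    publish(q, event)
    assert consume(q) == event  # received exactly what was sent
    assert q.empty()            # nothing left behind on the queue
```

The check on required fields is the interesting part: it catches the kind of data format mismatch that only shows up when two components actually exchange messages.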

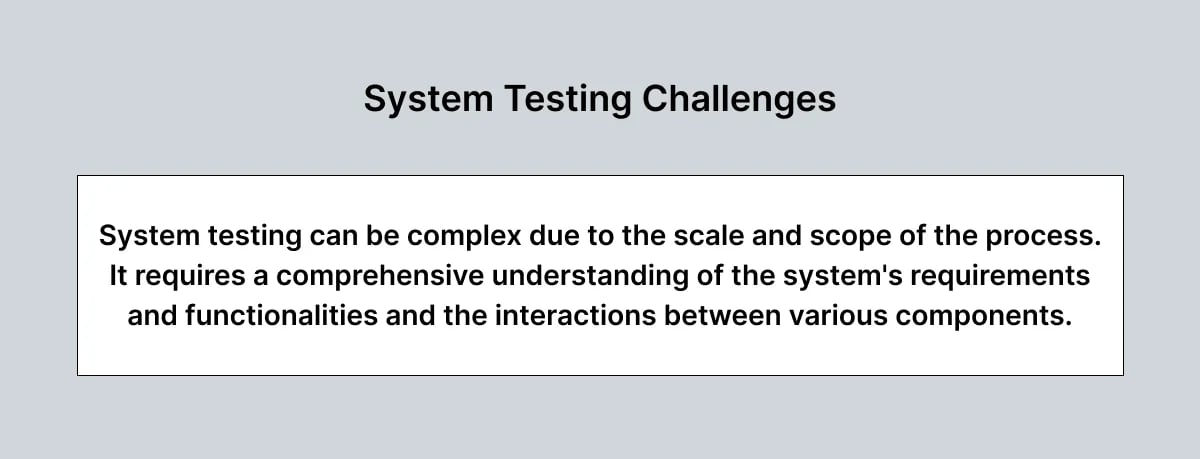

4. System Testing

System testing is a high-level software testing process where a complete and integrated software system is evaluated to ensure that it meets specified requirements. This type of testing is conducted after integration testing, where individual modules or components of the software are combined and tested as a group.

System testing typically employs a black-box testing approach, where the tester does not need to know the internal workings of the software. The focus is on input and output and the system's functionality. It is usually performed in an environment that resembles the production environment to simulate real-world conditions as closely as possible.

Types of System testing:

- Functional testing: Verifies that the software performs all its intended functions correctly.

- Non-functional testing: Includes testing for performance, reliability, scalability, usability, security, and compatibility with other systems or platforms.

- Regression testing: Ensures that new changes or enhancements haven't adversely affected existing functionalities.

- Load and Stress testing: Tests the system's behavior under normal and peak conditions.

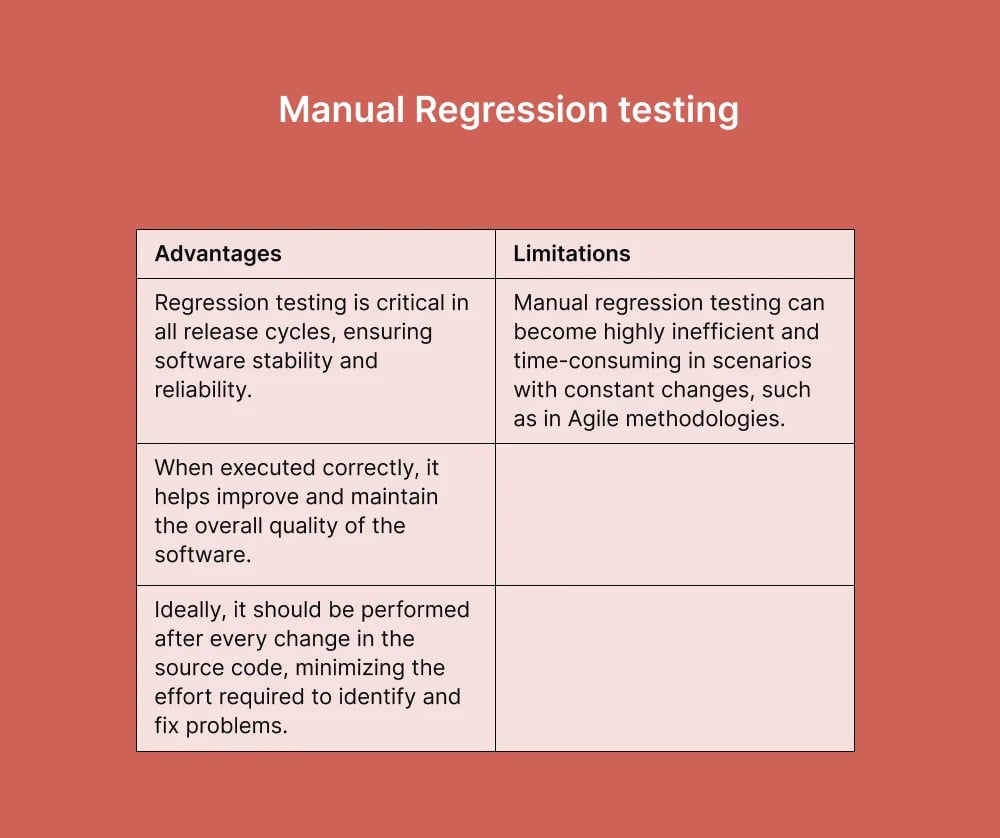

4.1. Manual Regression Testing

Manual regression testing is a method of verification that is performed manually. It confirms that a recent update, bug fix, or code change to a software product or web application has not adversely affected existing features. It utilizes all or some of the already executed test cases, which are re-executed to ensure existing functionality works correctly and no new bugs have been introduced.

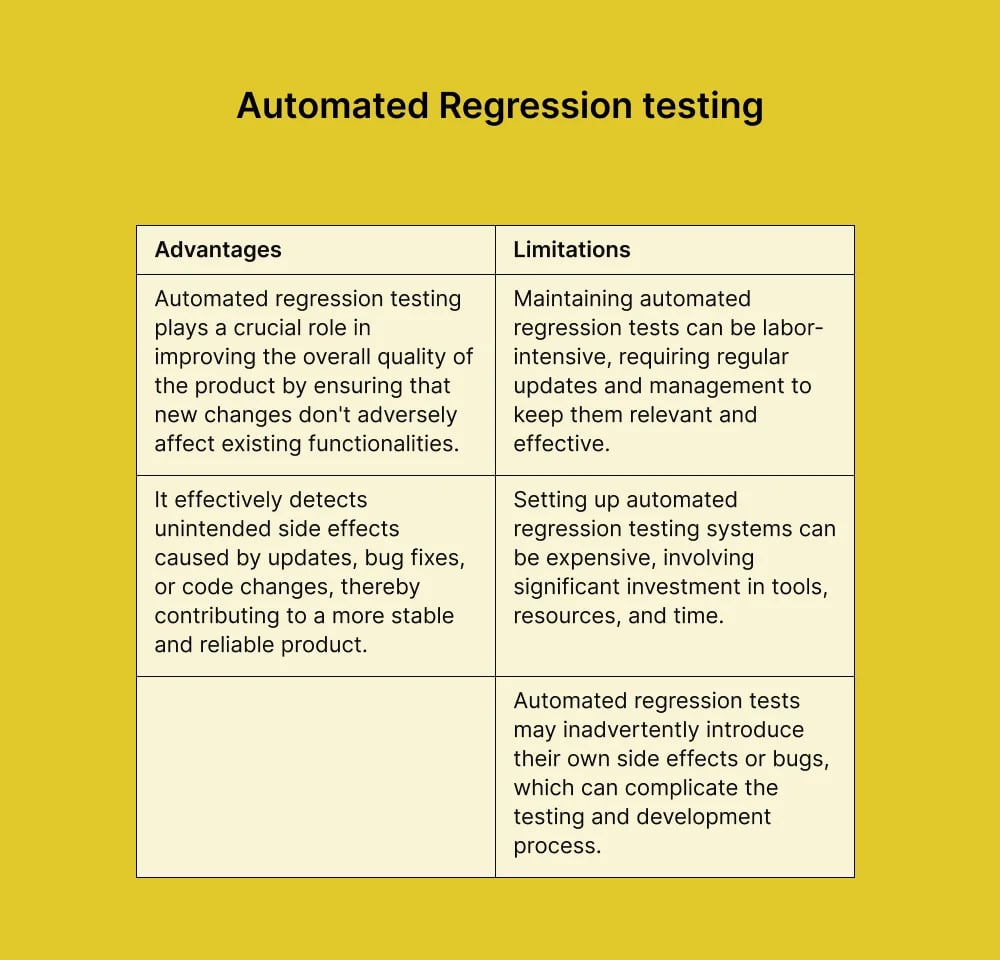

4.2. Automated Regression Testing

By nature, regression testing requires constant repetition, which makes it a natural candidate for automation. Automated regression testing has the same goal as manual regression testing, but the existing test cases are re-executed by a tool or script rather than by a person.
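A minimal sketch of the idea: keep the previously passing cases as data and re-run them after every change. The `apply_discount` function and the stored cases are hypothetical, invented only to show the pattern.

```python
# Previously passing cases, kept as ((price, percent), expected result) pairs.
REGRESSION_CASES = [
    ((100.0, 0), 100.0),
    ((100.0, 25), 75.0),
    ((80.0, 50), 40.0),
]


def apply_discount(price: float, percent: int) -> float:
    """Hypothetical function that was recently changed to clamp the discount."""
    percent = max(0, min(percent, 100))  # the newly introduced behaviour
    return round(price * (100 - percent) / 100, 2)


def run_regression_suite() -> list:
    """Re-run every stored case and return the ones that no longer pass."""
    failures = []
    for args, expected in REGRESSION_CASES:
        actual = apply_discount(*args)
        if actual != expected:
            failures.append((args, expected, actual))
    return failures
```

An empty list from `run_regression_suite()` suggests the change didn't break the behaviour covered by the existing cases; anything else points to a regression worth investigating.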

5. Smoke Testing

Smoke testing, often called "Build Verification Testing," is a type of software testing performed on a preliminary build to ascertain whether the most crucial functionalities of the application work correctly.

Smoke testing covers the most important functions of the application but does not delve deeply into the finer details. It's a surface-level test that checks basic functionalities like launching the app, simple user interactions, and the stability of the primary features. It's usually conducted using a black box testing approach, where the testers are not concerned with the internal code structure but rather with the basic functionality. Smoke tests are typically quick to run and are often used every time a new build is created, making it a regular part of the Continuous Integration and Continuous Deployment (CI/CD) pipeline.

Smoke testing can be done manually but is often automated to speed up the process, especially in agile development environments where builds are frequent.

It acts as a gatekeeper, ensuring a build is stable and functional enough to warrant further testing. If a build fails the smoke test, it is typically sent back for fixing before additional testing is done, saving time and resources.
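The gatekeeper idea can be sketched in a few lines. The checks here (`app_starts`, `home_page_loads`) are hypothetical placeholders; a real smoke suite would launch the application and exercise its most important paths.

```python
def run_smoke_checks(checks: dict) -> list:
    """Run each quick check and return the names of the ones that failed."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            ok = False  # a crash counts as a failed check
        if not ok:
            failed.append(name)
    return failed


# Hypothetical checks standing in for real launch and page-load probes.
def app_starts() -> bool:
    return True


def home_page_loads() -> bool:
    return True


def build_is_testable(checks: dict) -> bool:
    """Gate: only builds with no smoke failures go on to deeper testing."""
    return not run_smoke_checks(checks)
```

In a CI/CD pipeline, a result like `build_is_testable({"start": app_starts, "home": home_page_loads})` could decide whether the longer test stages run at all.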

6. Sanity Testing

Sanity testing is an important step in the software testing process, especially in Agile and iterative development environments. It helps quickly identify problems with key functionality after a minor change, ensuring that the software is stable enough for further detailed testing.

This testing focuses on quickly evaluating the functionality to determine whether it is rational (or "sane") to proceed with further, more rigorous testing.

Sanity testing is usually unscripted, focusing on particular functionalities rather than systematic test cases. It is typically regarded as a narrow subset of regression testing, performed on a build that has received a minor change and before full-scale testing of the application. It's usually carried out manually due to its focused and rapid nature.

7. Acceptance Testing

Acceptance testing is a critical phase in the software development lifecycle, primarily focused on determining whether the software meets the end user's needs and requirements. It's the final testing stage before the software is deployed. The client or end-users typically conduct this type of testing, sometimes called User Acceptance Testing (UAT).

Types of Acceptance Testing:

- User Acceptance Testing (UAT): Conducted by the end-users to ensure the software meets their needs and can handle required tasks in real-world scenarios.

- Alpha testing: Performed by internal staff before it is released to external testers.

- Beta testing: Conducted by a limited number of actual users in their real environment.

- Contract acceptance testing: Ensures the software meets the contractual requirements.

- Regulation acceptance testing: Verifies that the software complies with regulations and standards.

Feedback from acceptance testing is crucial as it may lead to changes or enhancements in the software. The approval from this phase is often considered the green light for software deployment.

Non-functional testing types

The importance of non-functional testing is on par with functional testing, as it plays a significant role in ensuring client satisfaction. While functional testing assesses what the software does, non-functional testing evaluates how well the software performs, contributing to the overall user experience and application quality. The following are the most common types:

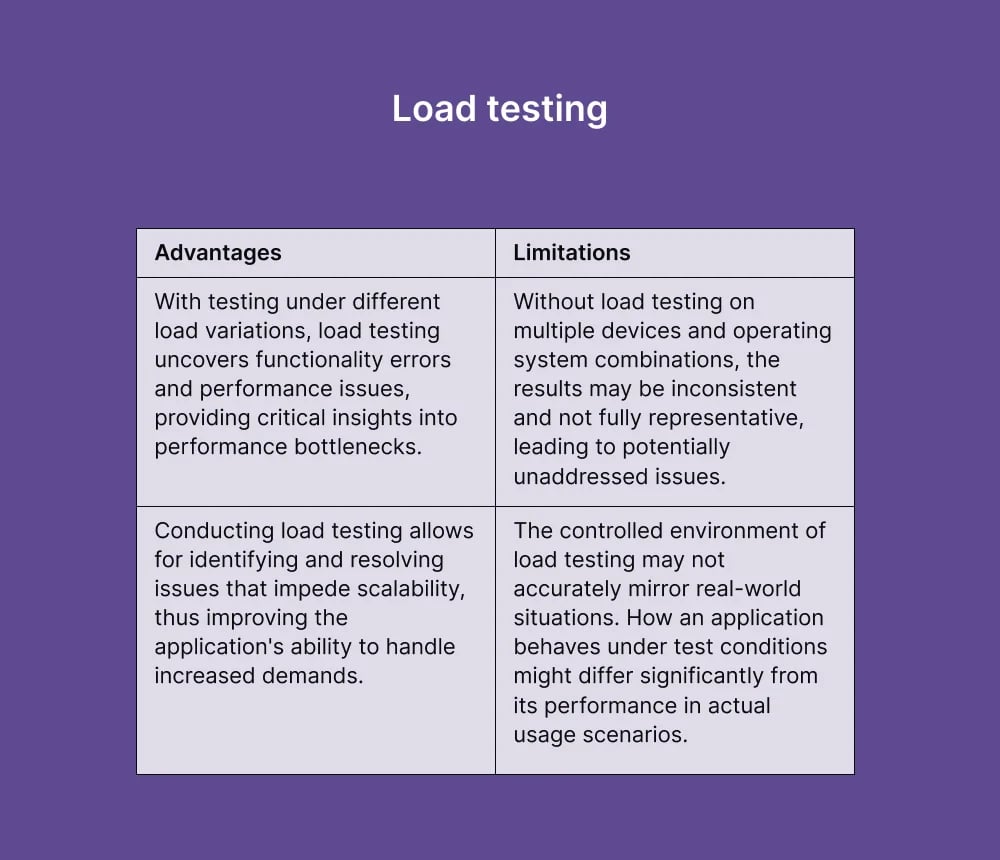

1. Load Testing

Once the development cycle is nearly complete, load testing is carried out to check how an application behaves under the actual demands of the end users. Load testing is usually performed using automated testing tools that simulate real-world usage. Its aim is to find issues that prevent the software from performing well under expected and heavy workloads.
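Dedicated tools usually do this at scale, but the basic idea can be sketched with Python's standard library. In the example below, `handle_request` is a hypothetical stand-in for a real request (it just sleeps briefly), and the numbers of users and requests are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(_: int) -> float:
    """Hypothetical request: sleeps briefly and returns its latency in seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # stands in for real work such as an HTTP call
    return time.perf_counter() - start


def run_load(users: int, requests_per_user: int) -> dict:
    """Send requests through a pool of `users` worker threads and summarise latency."""
    total = users * requests_per_user
    with ThreadPoolExecutor(max_workers=users) as pool:
        latencies = sorted(pool.map(handle_request, range(total)))
    return {
        "requests": total,
        "max_s": latencies[-1],
        "p95_s": latencies[int(0.95 * (total - 1))],  # approximate 95th percentile
    }
```

Calling `run_load(5, 4)` would issue 20 requests from five concurrent workers. Comparing the reported latencies as the number of workers grows gives a first impression of how the system copes with rising demand.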

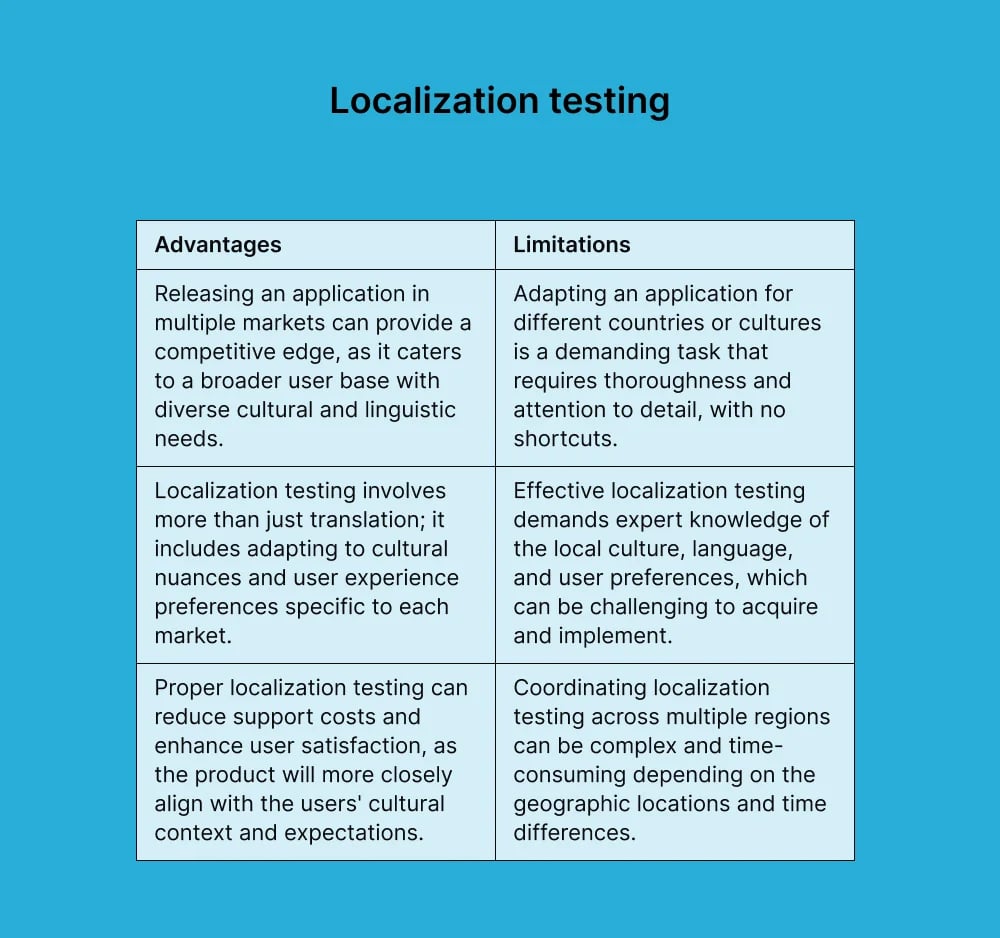

2. Localization Testing

Localization testing checks the quality of a localized version of an application for a particular culture or locale. When an application is customized for a foreign country or presented in a different language, localization testing ensures accuracy. It predominantly tests in three areas: linguistic, cosmetic, and functional.

Does the translation negatively affect a brand or messaging? Do the changes create any alignment or spacing problems for the user interface? Is functionality affected by regional preferences?
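Some of these checks can be partly automated. The sketch below compares a source catalog with a translated one and flags one example issue from each area. The `EN` and `DE` catalogs, the `{name}`-style placeholder format, and the 1.5x length threshold are all assumptions chosen for illustration.

```python
import re

# Hypothetical message catalogs; real projects usually load these from files.
EN = {"greeting": "Hello, {name}!", "cart": "You have {count} items"}
DE = {"greeting": "Hallo, {name}!", "cart": "Sie haben {count} Artikel"}

PLACEHOLDER = re.compile(r"\{(\w+)\}")


def localization_issues(source: dict, target: dict, max_growth: float = 1.5) -> list:
    """Return a list of human-readable problems found in the target catalog."""
    issues = []
    for key, text in source.items():
        if key not in target:
            issues.append(f"{key}: missing translation")  # linguistic
            continue
        translated = target[key]
        if set(PLACEHOLDER.findall(text)) != set(PLACEHOLDER.findall(translated)):
            issues.append(f"{key}: placeholders differ")  # functional
        if len(translated) > max_growth * len(text):
            issues.append(f"{key}: text may overflow the layout")  # cosmetic
    return issues
```

A script like this can't judge whether a translation reads naturally or fits the brand, so it complements rather than replaces review by native speakers.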

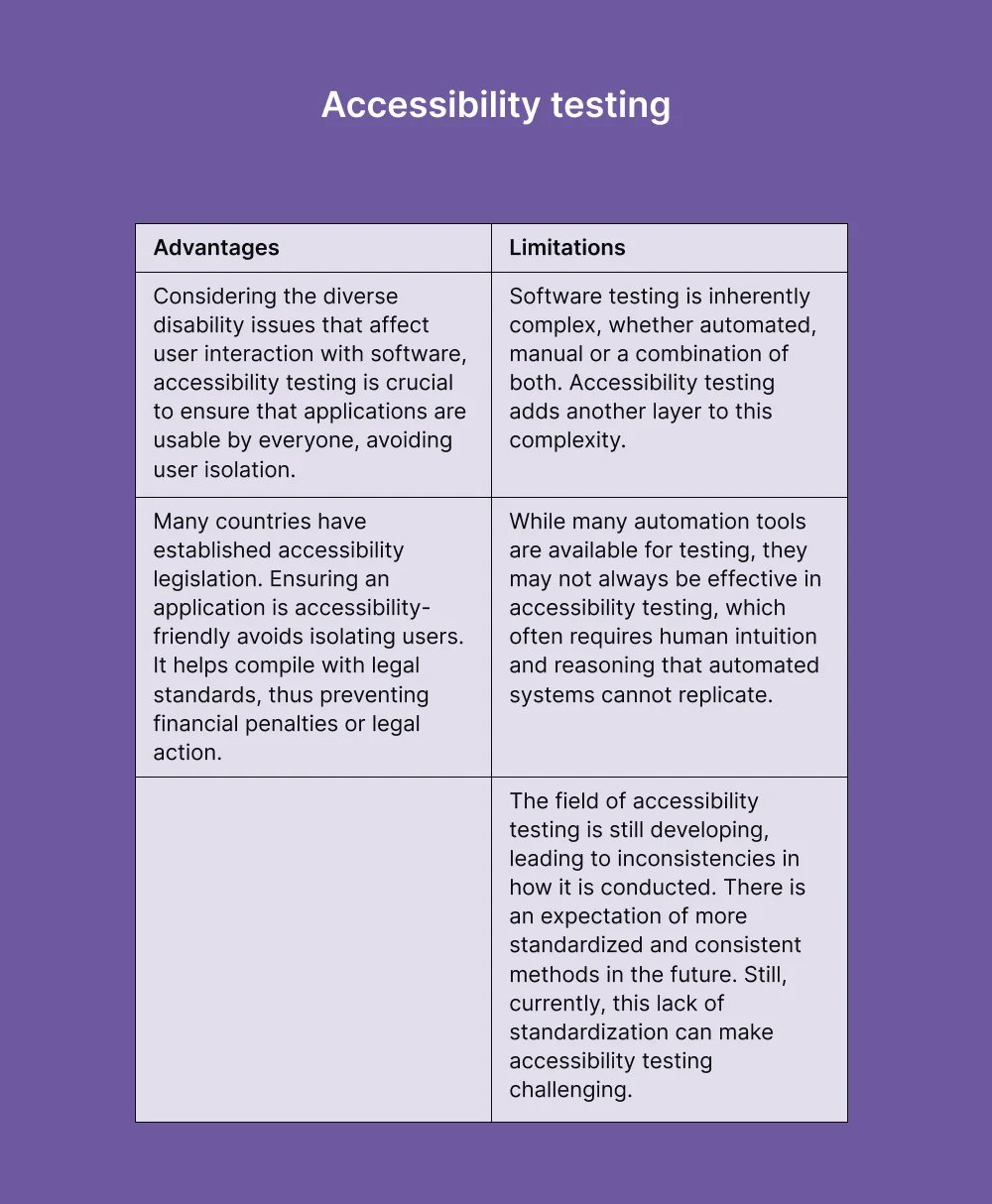

3. Accessibility Testing

Accessibility testing is performed to ensure that an application is usable for people with disabilities. This includes visual impairments, color blindness, poor motor skills, learning difficulties, literacy difficulties, deafness, and hearing impairments. For example, website accessibility can be measured against the Web Content Accessibility Guidelines (WCAG), published by the W3C.
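One small, automatable accessibility check is making sure images carry a text alternative for screen reader users. The sketch below uses Python's built-in `html.parser`; the `MissingAltFinder` class is a hypothetical helper, and it covers only this one aspect of accessibility.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag that has no alt attribute at all."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            # An empty alt="" is allowed for purely decorative images,
            # so only a completely absent attribute is flagged here.
            if "alt" not in attributes:
                self.missing.append(attributes.get("src", "<unknown>"))


def images_missing_alt(html: str) -> list:
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```

For example, `images_missing_alt('<img src="a.png" alt="Logo"><img src="b.png">')` would report `b.png`. Automated checks like this catch only a portion of accessibility problems, so manual testing with assistive technology is still needed.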

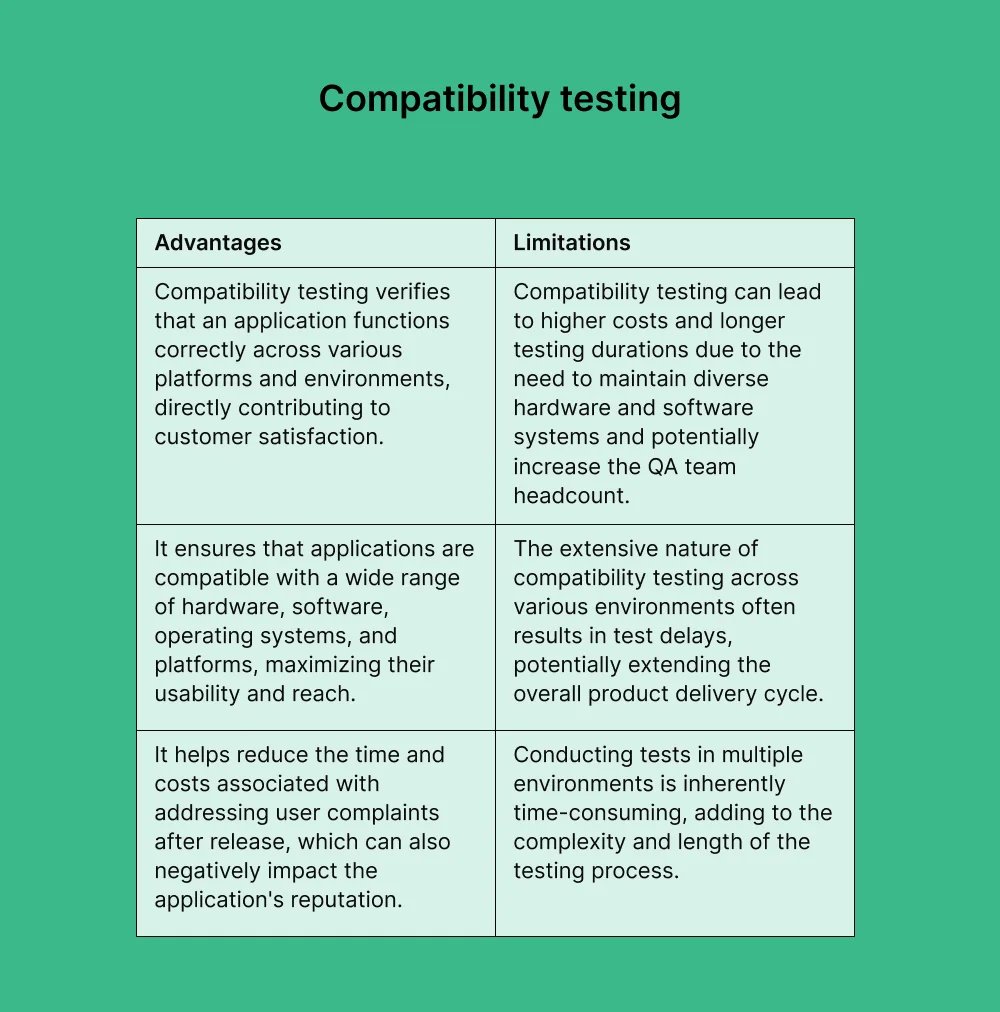

4. Compatibility Testing

Compatibility testing validates whether an application can run on different environments, including hardware, network, operating system, and other software.

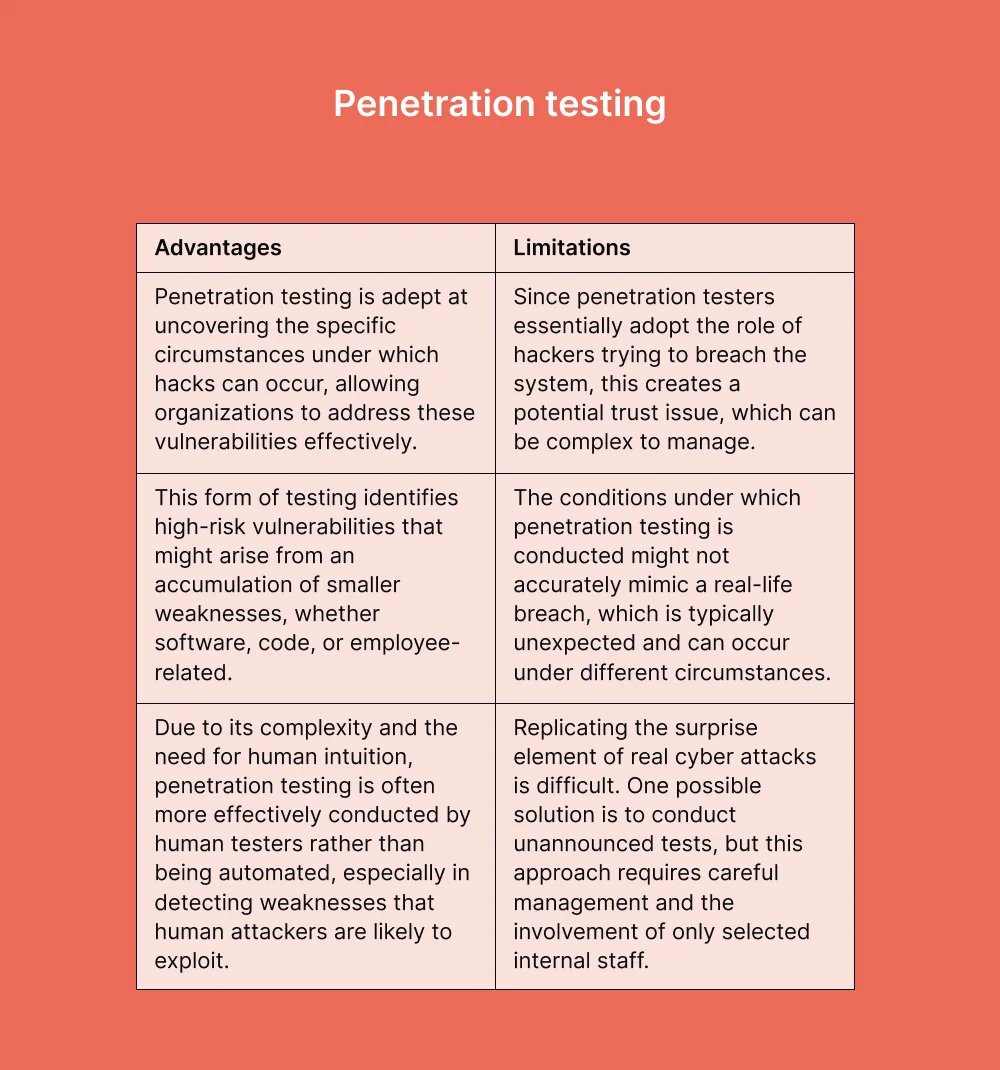

5. Penetration Testing

Penetration testing (or pen testing) is a type of security testing. It is done to test how secure an application and its environments (hardware, operating system, network, etc.) are when subject to attack by an external or internal intruder. An intruder is defined as a hacker or malicious program. Penetration tests either attack the system by force or exploit a weakness to gain access to an application. They use the same methods and tools that a hacker would use, but the intention is to identify vulnerabilities so they can be fixed before a real hacker or malicious program exploits them.

The "in-between" testing types

Several testing types in software development blur the lines between functional and non-functional testing, addressing aspects of both. These "in-between" testing types include:

1. Usability Testing

While primarily a non-functional aspect focusing on user experience and interface design, usability testing can also encompass functional elements. It ensures that the software is user-friendly and that functional aspects like navigation and data input work seamlessly.

2. Compatibility Testing

This type of testing checks both functional and non-functional aspects. Functionally, it ensures that the software operates as expected across different browsers, operating systems, and devices. Non-functionally, it addresses user experience in different environments.

3. Regression Testing

It is typically seen as a functional testing type, ensuring that new code changes don't adversely affect existing functionalities. However, it can also cover non-functional aspects if these are impacted by code changes, such as performance or usability issues.

4. Performance Testing

Primarily non-functional, focusing on how the software performs under various conditions. However, it can include functional elements, like ensuring that functionalities work correctly under load or stress conditions.

5. Security Testing

It is often categorized as non-functional due to its focus on the system's robustness, resilience, and secure configuration. However, it also includes functional aspects, such as verifying the functionality of access control mechanisms, authentication processes, etc.

6. Exploratory Testing

It is predominantly a manual testing approach where testers explore the application without predefined test cases. While it can focus on functional aspects, it often uncovers non-functional issues like usability, performance, and reliability concerns.

7. API Testing

It involves testing application programming interfaces (APIs) both functionally (ensuring they return the correct data, error handling, etc.) and non-functionally (testing performance and security aspects of the API).

8. End-to-End Testing

Simulates real user scenarios, thereby covering functional aspects of the application. It also assesses non-functional aspects like system performance and user experience.

9. GUI Testing

GUI (Graphical User Interface) testing can be categorized as both functional and non-functional testing, depending on the aspects being tested:

Functional aspects:

- When GUI testing focuses on testing the functionality of the interface elements, it is considered functional testing. For example, checking if buttons perform their intended actions when clicked, if menus open correctly, or if input fields accept and process data appropriately.

- Functional GUI testing ensures that every interactive element works as expected to fulfill its designated task.

Non-Functional aspects:

- GUI testing also covers non-functional aspects such as usability, layout, consistency, and aesthetic appeal of the interface. It includes assessing the layout, color schemes, font sizes, and overall design consistency.

- Non-functional GUI testing is crucial for user satisfaction, accessibility, and overall perception of the software.

Conclusion

No single testing method addresses all software requirements. Leading organizations employ a mix of testing strategies at various stages of development for optimal outcomes, a concept central to QAOps. Much like using top-tier golf clubs effectively, software testing demands knowledge of appropriate utilization.

Software testing, pivotal in the development lifecycle, ensures applications perform as expected and meet user needs. This involves thorough testing to identify flaws, confirm functionality, and adhere to specific requirements. Innovations in testing methods and tools, particularly those used by Global App Testing (GAT), have greatly improved this process.

Key advantages of Global App Testing:

- Diverse Environments: GAT tests across various devices, OS, and networks, closely simulating real user conditions.

- Crowdsourced expertise: Access to a global network of testers enables rapid and extensive testing capabilities.

- Broad coverage: Allows simultaneous testing of different application aspects, such as functionality, usability, and localization.

- Cost efficiency: Outsourcing with GAT is more economical than maintaining a large in-house team, reducing recruitment, training, and infrastructure costs.

- Immediate feedback: Real-time analytics from GAT aid in swift issue resolution and development, hastening time-to-market.

- Flexibility and scalability: GAT's adaptability suits fluctuating testing demands and multiple project management.

- Versatile testing support: Accommodates various test types, including exploratory, regression, and performance testing.

- Global insight: GAT's worldwide tester base of 90,000+ testers offers critical insights for globally relevant applications.
